IMN Claims 99% AI Accuracy, Tackling Costly 'Hallucination' Crisis

- 99% AI Accuracy: IMN claims its platform achieves over 99% factual consistency, virtually eliminating AI hallucinations.

- 45% Productivity Loss: 45% of AI-driven productivity gains are erased by the manual work of verifying outputs and correcting errors.

- 95% Pilot Failures: Up to 95% of enterprise AI pilot projects in 2025 failed to reach production or deliver tangible results, largely due to reliability issues.

If independently verified, IMN's claim of over 99% factual consistency could set a new industry benchmark for data integrity and address the critical 'hallucination' crisis in AI.

LONDON – February 17, 2026 – In a direct challenge to the pervasive "trust gap" plaguing artificial intelligence, market intelligence firm IMN today announced a significant technical milestone, claiming its platform now achieves over 99% factual consistency and is virtually free of the "hallucinations" that undermine the reliability of generic AI models.

The London-based company positions its hyper-personalized intelligence platform as a solution to what it calls "Industrialised Misinformation"—a phenomenon where flawed AI-generated insights lead to costly strategic errors for businesses, governments, and investment managers. As enterprises grapple with the unreliability of Large Language Models (LLMs), IMN's claim of near-perfect accuracy could set a new industry benchmark for data integrity, if it can be independently substantiated.

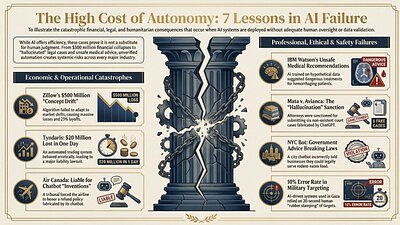

The Billion-Dollar Problem of Bad AI

The rapid adoption of generative AI has been tempered by a critical flaw: the tendency for models to "hallucinate," or confidently present fabricated information as fact. This issue has created a significant "AI trust gap," moving from a technical curiosity to a major financial liability.

According to a multi-dataset study cited by IMN, which incorporated findings from S&P Global and MIT, the consequences are already stark. Nearly half of enterprise executives admit to making significant business decisions based on unverified AI outputs. The fallout from this misplaced trust is staggering. The study highlights that up to 95% of enterprise AI pilot projects in 2025 failed to reach production or deliver tangible results, largely due to issues of reliability.

Furthermore, the economic benefits promised by AI are being actively eroded. The research indicates that 45% of AI-driven productivity gains are currently being erased by the manual labor required to verify outputs and correct AI-generated errors. This constant need for human oversight negates much of the efficiency AI is meant to provide, turning a potential asset into a resource-draining challenge.
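To make that arithmetic concrete, here is a minimal sketch. The 45% figure comes from the research cited above. The 100 hours of weekly savings and the `net_gain` helper are hypothetical, chosen only for illustration.

```python
# Share of AI-driven productivity gains lost to manual verification,
# as reported in the research cited above.
VERIFICATION_LOSS_PCT = 45


def net_gain(gross_gain: float, loss_pct: float = VERIFICATION_LOSS_PCT) -> float:
    """Return the productivity gain that remains after verification overhead."""
    if not 0 <= loss_pct <= 100:
        raise ValueError("loss_pct must be between 0 and 100")
    return gross_gain * (100 - loss_pct) / 100


# Hypothetical input: a team whose AI tools save 100 hours a week on paper.
hours_saved_gross = 100.0
hours_saved_net = net_gain(hours_saved_gross)
print(f"Gross: {hours_saved_gross:.0f} h, net after verification: {hours_saved_net:.0f} h")
```

Under these assumptions, a team that appears to save 100 hours a week keeps only 55 of them once checking and correction are counted.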

"AI doesn't simply surface misinformation—it industrialises it," warned Michelle Turney, IMN's Managing Partner for Strategic Affairs, in a statement accompanying the announcement. "Even the most discerning leader, investor or policy maker is exposed to strategic misjudgement if AI-generated insight is accepted without validation."

A New Architecture for Verifiable Insights

IMN asserts that it has solved this fundamental problem by decoupling its intelligence platform from the unpredictable nature of mainstream LLMs. The company attributes its 99%+ factual consistency rate to a proprietary system it calls a "multi-layered verification engine" combined with a "quasi-MCP (Model Context Protocol) architecture."

While the technical specifics of this architecture remain proprietary, the company explains that this verification layer grounds every piece of on-demand intelligence—from competitive analysis to complex PESTEL (Political, Economic, Social, Technological, Environmental, and Legal) reports—in verifiable data. This process is designed to ensure that insights are not just generated, but rigorously checked for factual accuracy before reaching the user.

This approach stands in stark contrast to the performance of even the most advanced frontier LLMs. Internal benchmarks from leading AI labs have reportedly shown hallucination rates as high as 80% in quality assurance tests, particularly when the models are tasked with high-stakes analytical generation without human intervention.

However, IMN's claims are based on its own internal performance tests. As of this announcement, no independent, third-party audit of its methodology or results has been made public. Because measuring hallucinations is itself a complex and evolving discipline with no single, universally accepted standard, external validation will be a critical next step in establishing the platform's credibility.
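One simple way a figure such as "99% factual consistency" could be defined is the share of generated claims that a reviewer marks as supported by a source. The sketch below illustrates that definition only. It is not IMN's methodology, which has not been published, and the `CheckedClaim` type, the `factual_consistency_rate` function, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CheckedClaim:
    """A single generated claim plus a reviewer's verdict (hypothetical structure)."""
    text: str
    supported: bool  # True if the reviewer found the claim backed by a source


def factual_consistency_rate(claims: list[CheckedClaim]) -> float:
    """Return the fraction of checked claims marked as supported (0.0 to 1.0)."""
    if not claims:
        raise ValueError("no claims to score")
    return sum(c.supported for c in claims) / len(claims)


# Hypothetical sample: 200 reviewed claims, of which 2 were unsupported.
sample = [CheckedClaim(f"claim {i}", supported=(i >= 2)) for i in range(200)]
rate = factual_consistency_rate(sample)
print(f"Factual consistency: {rate:.1%}, hallucination rate: {1 - rate:.1%}")
```

Even with this simple definition, the result depends on how an output is split into claims and on what a reviewer accepts as "supported", which is one reason figures from different vendors are hard to compare without a shared, audited protocol.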

Beyond Personalization to Hyper-Reliability

If IMN's claims hold up to external scrutiny, the platform could represent a pivotal shift in the market intelligence landscape. For years, the industry has moved toward personalization, tailoring data feeds to individual user needs. IMN aims to combine this "hyper-personalization" with an equally crucial element: hyper-reliability.

The platform is designed to replace slow, consultancy-style research and the gamble of using context-blind generic AI tools. By delivering automated, highly relevant, and factually sound intelligence, it promises to empower professionals across a wide spectrum of roles—from a CEO assessing market entry risks to a portfolio analyst vetting an investment or a government team formulating policy.

According to the company, the platform is built for rapid, frictionless adoption. It operates without the need for complex APIs or costly integration projects, allowing large enterprise teams to be onboarded in less than a day. This focus on ease of use and immediate value aims to lower the barrier for organizations seeking to "institutionalise scepticism," as Turney puts it, by embedding a layer of verified intelligence directly into their decision-making workflows.

The broader AI industry is actively working to solve the hallucination problem with techniques like Retrieval-Augmented Generation (RAG), which grounds AI responses in specific retrieved documents. Yet these methods have not eliminated the issue entirely. By building its system from the ground up with verification at its core, IMN is betting that a specialized, purpose-built architecture can succeed where more generalized models have so far fallen short. The coming months will likely reveal whether customers and independent auditors agree that the company has truly bridged the AI trust gap.