The Human Firewall: Why Deepfakes Are Redefining Cybersecurity

- $25.6 million: Amount lost in a single deepfake-driven fraud incident in early 2024.

- 3,000% increase: Surge in deepfake fraud attempts in 2023.

- 24.5% accuracy: Human ability to detect high-quality deepfake videos.

Experts emphasize that deepfake attacks exploit human psychology, requiring organizations to adopt a holistic defense strategy that integrates people, processes, and technology to mitigate risks effectively.

By Jessica Campbell

ARLINGTON, Va. – March 26, 2026 – In early 2024, a finance employee in the Hong Kong office of a multinational firm received an email, apparently from the company's UK-based CFO, summoning them to a video conference. On the call, the employee saw and heard familiar faces, the CFO and other colleagues, discussing the need for a secret, time-sensitive transaction. Following the instructions given on the call, the worker transferred $25.6 million to the designated accounts. The problem? None of the colleagues on the call were real. They were all AI-generated deepfakes. The money was gone.

This incident is not a futuristic hypothetical; it is a stark example of a new and rapidly escalating class of cyberattack that targets an organization's most vulnerable asset: human trust. As artificial intelligence becomes more sophisticated and accessible, deepfake attacks are surging, leaving businesses exposed to massive financial fraud, data theft, and catastrophic reputational damage. A new report from global research and advisory firm Info-Tech Research Group, titled "Defend Against Deepfake Cyberattacks," confirms that traditional security measures are no longer enough, urging a fundamental shift in how organizations approach defense.

The New Front Line: Hacking Human Psychology

For decades, cybersecurity has focused on building taller walls: stronger firewalls, smarter antivirus software, and more complex encryption. Deepfakes make much of this Maginot Line irrelevant because they never have to breach it; the victim opens the front door for them. They don't exploit code; they exploit cognition. By impersonating trusted figures like executives, colleagues, or vendors with terrifying accuracy, these attacks leverage powerful psychological cues of authority and urgency.

Recent data reveals the alarming effectiveness of this strategy. Fraud attempts using deepfake technology skyrocketed by 3,000% in 2023, and by 2024 one estimate put the rate at one deepfake attack every five minutes. The financial toll is staggering, with businesses facing average losses nearing $500,000 per incident. The core issue is that humans are fundamentally ill-equipped to detect these forgeries. Studies show that people can identify high-quality deepfake videos with an accuracy rate as low as 24.5%, meaning viewers are roughly three times as likely to be fooled as to spot the fake.

This reality forces a difficult conclusion: technology alone cannot solve a problem rooted in human behavior. Info-Tech's research emphasizes that detection tools, while emerging, are not a silver bullet.

"Existing security frameworks acknowledge deepfakes but provide little practical direction," says Alexander Toti, a research analyst at Info-Tech Research Group. "Detection tools are emerging, but they are inconsistent and cannot serve as a silver bullet. Organizations need to understand where they are most exposed, which processes, individuals, and transactions are vulnerable, and what the impact would be if a deepfake succeeds."

The Anatomy of an AI-Powered Attack

The threat is not monolithic. Criminals are deploying deepfakes across a variety of vectors, each designed to exploit different organizational weak points:

Executive Impersonation & Vishing: This is the most common form, often called "CEO fraud." In these voice-phishing ("vishing") attacks, voice-cloning AI produces a convincing replica of an executive from just a few seconds of audio scraped from a podcast or earnings call, and attackers use it to call finance departments demanding urgent, confidential wire transfers.

Sophisticated Video Scams: As the $25.6 million Hong Kong case shows, attackers are now staging multi-participant video calls to overwhelm a target's skepticism. These attacks take more resources to mount, but they can be devastatingly effective for large-sum fraud.

Identity Verification Bypass: Deepfakes are being used to fool the automated Know Your Customer (KYC) and identity verification systems used by banks and other services. By using a deepfake video of a legitimate person during a liveness check, fraudsters can open accounts or authorize transactions illicitly.

Hyper-Realistic Phishing: Instead of generic scam emails, attackers can use deepfake audio or video snippets in highly personalized spear-phishing campaigns to manipulate employees into divulging credentials or other sensitive data.

Despite these clear and present dangers, corporate preparedness remains dangerously low. Surveys indicate that a significant portion of business leaders are not fully familiar with the technology, and only 13% of organizations report having any anti-deepfake protocols in place. This gap between the threat and the readiness to combat it is where firms like Info-Tech are focusing their efforts.

From Reactive Panic to Proactive Resilience

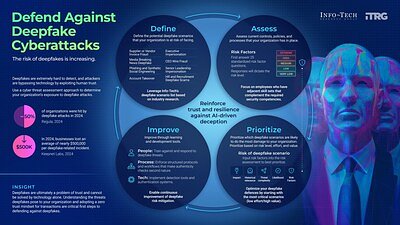

To counter a threat that blends technology and psychology, organizations must adopt a holistic defense strategy that integrates people, processes, and technology. The Info-Tech Research Group blueprint outlines a practical, four-step framework to move from a reactive posture to one of proactive resilience.

1. Define Deepfake Threat Scenarios: Leadership and security teams must collaborate with business units to identify the most plausible and damaging attack scenarios. Is the greatest risk an impersonated CEO demanding a wire transfer, a fake vendor submitting a doctored invoice, or a social engineering attack on an IT administrator?

2. Assess Organizational Controls and Risk Factors: Once scenarios are defined, organizations must conduct a frank assessment of their existing defenses. This involves evaluating everything from financial transaction protocols and identity verification processes to the current level of employee awareness and training.

3. Prioritize High-Risk Scenarios: Not all risks are created equal. By analyzing the likelihood, potential impact, and complexity of each scenario, leaders can allocate resources effectively, focusing on mitigating the threats that pose the greatest danger to the organization.

4. Improve Through People, Process, and Technology: This is the implementation phase. It involves a coordinated effort across IT, HR, and security teams to strengthen defenses. This includes training employees to recognize the signs of a deepfake attack, embedding multi-factor verification protocols into workflows (such as a mandatory callback to a trusted phone number for any unusual financial request), and finally, implementing supporting detection and authentication technologies where appropriate.
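The prioritization and verification steps of the framework can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Info-Tech's method: the 1-to-5 scales, the likelihood × impact ÷ complexity formula, the CALLBACK_THRESHOLD value, the TRUSTED_DIRECTORY contents, the example scenarios, and every function name are hypothetical. What it shows is the shape of the idea: rank scenarios so effort goes to the most dangerous ones first, and hold any unusual payment until it has been confirmed through a number the organization already had on file.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Scenario:
    """A deepfake threat scenario scored on hypothetical 1-5 scales."""
    name: str
    likelihood: int  # 1 = rare, 5 = expected
    impact: int      # 1 = minor, 5 = severe
    complexity: int  # 1 = easy for an attacker, 5 = hard for an attacker


def priority(s: Scenario) -> float:
    # Assumed formula: likelihood x impact, discounted by how hard the attack is to mount.
    return s.likelihood * s.impact / s.complexity


def rank(scenarios: list[Scenario]) -> list[Scenario]:
    # Highest-priority scenarios first, so mitigation effort goes there.
    return sorted(scenarios, key=priority, reverse=True)


# Hypothetical directory of callback numbers recorded in advance.
# Numbers are never taken from the email, call, or request being verified.
TRUSTED_DIRECTORY = {"cfo": "+44 20 7946 0000"}
CALLBACK_THRESHOLD = 10_000  # hypothetical amount that always triggers a callback


@dataclass
class PaymentRequest:
    requester: str
    amount: float
    new_beneficiary: bool
    urgent_or_secret: bool


def needs_callback(req: PaymentRequest) -> bool:
    # Any unusual signal triggers out-of-band verification.
    return (
        req.amount >= CALLBACK_THRESHOLD
        or req.new_beneficiary
        or req.urgent_or_secret
    )


def callback_number(req: PaymentRequest) -> Optional[str]:
    # Look the number up independently; None means no trusted channel exists.
    return TRUSTED_DIRECTORY.get(req.requester)


def may_release(req: PaymentRequest, confirmed_on_callback: bool) -> bool:
    if not needs_callback(req):
        return True
    # Hold the payment unless a trusted number exists and the requester confirmed on it.
    return callback_number(req) is not None and confirmed_on_callback


# Usage with made-up scenarios and a made-up request.
scenarios = [
    Scenario("Cloned CEO voice requests a wire transfer", likelihood=4, impact=5, complexity=2),
    Scenario("Fake vendor submits a doctored invoice", likelihood=3, impact=3, complexity=2),
    Scenario("Multi-person deepfake video call", likelihood=2, impact=5, complexity=4),
]
for s in rank(scenarios):
    print(f"{priority(s):5.1f}  {s.name}")

wire = PaymentRequest("cfo", 25_600_000, new_beneficiary=True, urgent_or_secret=True)
print(may_release(wire, confirmed_on_callback=False))  # → False
print(may_release(wire, confirmed_on_callback=True))   # → True
```

The design choice worth noting is that the callback number comes from a directory maintained before any request arrives. A number supplied in the suspicious email or on the call itself would let the attacker answer their own verification.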

This structured approach shifts the focus from a futile attempt to find a single technological fix to building a resilient organization. It champions a "zero trust" mindset that extends beyond networks and into human communication itself. In an era of AI-driven deception, embedding verification and a healthy skepticism into corporate culture is not an inconvenience—it is a critical component of survival. Ultimately, the most robust defense against attacks on trust is to build a culture where verification is an instinct, not an afterthought.