Switch Unveils Autonomous AI Factories with NVIDIA Digital Twin Tech

- AI-optimized server racks now demand over 100 kilowatts (kW) of power and cooling, a tenfold increase from traditional racks.
- NVIDIA's GB200 NVL72 system requires 140 kW of cooling capacity alone.
- Switch's LDC EVO system enables autonomous management of gigawatt-scale AI factories.

According to Switch and NVIDIA, autonomous AI factories built on digital twin technology are needed to manage the extreme power and cooling demands of next-generation AI infrastructure, marking a shift from human-managed to fully automated data centers.

LAS VEGAS, NV – March 16, 2026 – Data center operator Switch today announced a significant leap forward in AI infrastructure, revealing the integration of NVIDIA's Omniverse DSX Blueprint into its new LDC EVO™ operating system. The move signals a pivotal shift from human-managed facilities to fully automated, intelligent "AI Factories" capable of managing the unprecedented demands of next-generation artificial intelligence.

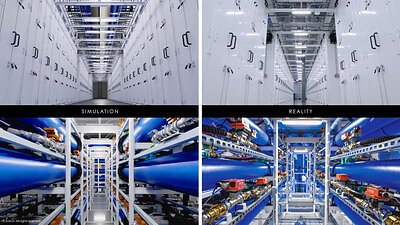

At the heart of the announcement is the creation of a live, physics-accurate digital twin for Switch's entire EVO AI Factory™ portfolio. This virtual replica, powered by NVIDIA's simulation technology and the OpenUSD standard, allows the data center to monitor, model, and automate its own complex operations in near real-time, effectively creating a self-driving environment for high-performance computing.

Beyond Human Management: The Rise of the Digital Twin

For decades, data centers have relied on Data Center Infrastructure Management (DCIM) software—a system where human operators, aided by monitoring tools, make critical decisions about power, cooling, and capacity. However, the explosive growth of AI has rendered this model obsolete for cutting-edge deployments. Modern AI workloads operate at what the industry calls "extreme density," creating an operational complexity that far exceeds human management capabilities.

This density is no longer a theoretical challenge. AI-optimized server racks now routinely demand over 100 kilowatts (kW) of power and cooling, a tenfold increase from traditional racks. GPUs such as NVIDIA's B200 draw roughly 1,000 watts or more per chip, concentrating immense heat in small spaces. The NVIDIA GB200 NVL72 system, which packs 72 GPUs into a single liquid-cooled rack, requires a staggering 140 kW of cooling capacity on its own.
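To put the 140 kW figure in perspective, the sketch below converts a rack's heat load into the coolant flow a liquid loop would need, using Q = m_dot · c_p · ΔT solved for the mass flow m_dot. It assumes water as the coolant (specific heat about 4,186 J/(kg·K), density about 1 kg/L), a 10 K supply-to-return temperature rise, and that all electrical input ends up as heat in the loop. These are illustrative assumptions, not figures from Switch or NVIDIA; real NVL72 deployments may use different coolants and temperature deltas.

```python
# Back-of-envelope coolant sizing for a liquid-cooled rack.
# Assumptions (illustrative only): water coolant, 10 K temperature rise,
# and all electrical input ending up as heat in the coolant loop.

WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approx. c_p of water near room temperature
WATER_DENSITY_KG_PER_L = 1.0             # approximation, ignores temperature dependence


def required_flow_lpm(heat_load_kw: float, delta_t_k: float = 10.0) -> float:
    """Return coolant flow in litres per minute needed to absorb heat_load_kw.

    Uses Q = m_dot * c_p * delta_T, solved for the mass flow m_dot.
    """
    if heat_load_kw <= 0 or delta_t_k <= 0:
        raise ValueError("heat load and temperature rise must be positive")
    mass_flow_kg_s = (heat_load_kw * 1000.0) / (WATER_SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)
    litres_per_s = mass_flow_kg_s / WATER_DENSITY_KG_PER_L
    return litres_per_s * 60.0


if __name__ == "__main__":
    cases = [("traditional rack", 10.0), ("AI rack", 100.0), ("GB200 NVL72 (as cited)", 140.0)]
    for label, kw in cases:
        print(f"{label:>24}: {kw:6.1f} kW -> {required_flow_lpm(kw):6.1f} L/min")
```

Under these assumptions the cited 140 kW corresponds to roughly 200 L/min of water, fourteen times what a 10 kW rack would need. Because the relationship is linear in heat load, doubling rack power doubles the required flow at a fixed temperature rise.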

Switch's LDC EVO, powered by the NVIDIA blueprint, is designed to replace the legacy DCIM model entirely. Instead of presenting static data for human review, it creates a dynamic, 3D digital twin of the entire AI factory. This allows Switch and its customers to simulate and validate hardware configurations, such as deployments of NVIDIA Grace Blackwell on Dell PowerEdge servers, before a single physical server is installed. According to Switch, this "simulate-then-deploy" approach shortens deployment times, mitigates risk, and helps ensure that the physical facility performs as designed from day one.
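At its simplest, "simulate-then-deploy" means checking a proposed rack layout against facility limits before any hardware arrives. The sketch below performs only that static capacity check; a physics-accurate twin of the kind described, with electrical and thermal simulation, goes much further. The class and function names (`RackPlan`, `HallCapacity`, `validate_plan`) and every number are hypothetical and do not reflect the LDC EVO or Omniverse DSX APIs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RackPlan:
    """A proposed rack (hypothetical fields, values in kW)."""
    name: str
    power_kw: float
    liquid_cooling_kw: float  # heat removed by the liquid loop
    air_cooling_kw: float     # residual heat left for room air handling


@dataclass(frozen=True)
class HallCapacity:
    """Hypothetical limits of one data hall (in kW)."""
    power_kw: float
    liquid_cooling_kw: float
    air_cooling_kw: float


def validate_plan(racks: list[RackPlan], hall: HallCapacity) -> list[str]:
    """Check a proposed deployment against hall limits before anything is installed.

    Returns human-readable violations; an empty list means the plan fits.
    """
    problems: list[str] = []
    for rack in racks:
        # Each rack's heat must be fully accounted for by liquid plus air cooling.
        if abs(rack.liquid_cooling_kw + rack.air_cooling_kw - rack.power_kw) > 1e-6:
            problems.append(f"{rack.name}: cooling split does not match power draw")
    totals = {
        "power": sum(r.power_kw for r in racks),
        "liquid cooling": sum(r.liquid_cooling_kw for r in racks),
        "air cooling": sum(r.air_cooling_kw for r in racks),
    }
    limits = {
        "power": hall.power_kw,
        "liquid cooling": hall.liquid_cooling_kw,
        "air cooling": hall.air_cooling_kw,
    }
    # Compare aggregate demand with each facility limit.
    for key, total in totals.items():
        if total > limits[key]:
            problems.append(f"{key}: {total:.1f} kW exceeds limit of {limits[key]:.1f} kW")
    return problems
```

For example, with a hypothetical hall rated at 500 kW of power, 450 kW of liquid cooling, and 60 kW of air cooling, three 140 kW racks split 120/20 between liquid and air fit, while adding a fourth rack produces three violations (power, liquid cooling, and air cooling).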

Orchestrating the Gigawatt-Scale AI Revolution

The goal is to power the next phase of the AI revolution, which experts predict will be built within "gigawatt-scale AI factories." This new class of data center requires a fundamental rethinking of infrastructure, from the power grid to the individual chip.

"LDC EVO is the operating system for Switch's EVO AI Factory, orchestrating the modular and configurable campus architecture that enables hybrid cooling and supports extreme AI densities," said Zia Syed, Chief Technology Officer of Switch. "It's built to operate every generation of NVIDIA reference design, including the Rubin DSX architecture. Leveraging NVIDIA Omniverse libraries and OpenUSD for digital twins, we've layered in automation workflows and operational intelligence to unify deployments."

This orchestration is critical for managing the immense power and cooling densities in real time. The system can autonomously adjust to thermal fluctuations, power spikes, and changing workloads to maximize performance and efficiency.
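As a toy illustration of adjusting to power spikes without a human in the loop, the sketch below closes a feedback loop between a simulated rack's coolant return temperature and its pump flow. Everything here is an assumption: the plant model (steady-state Q = m_dot · c_p · ΔT with water), the 30 °C supply and 40 °C target return temperatures, the gain, and the flow limits. It is not Switch's control logic, which the announcement does not describe, and it ignores sensor noise, actuator lag, and safety interlocks.

```python
# Toy feedback loop: adjust coolant flow so a rack's return temperature
# tracks a setpoint as its heat load changes. All constants are assumptions.

WATER_CP_J_PER_KG_K = 4186.0  # assumed coolant: water


def return_temp_c(supply_c: float, heat_kw: float, flow_kg_s: float) -> float:
    """Steady-state return temperature from Q = m_dot * c_p * delta_T."""
    return supply_c + (heat_kw * 1000.0) / (flow_kg_s * WATER_CP_J_PER_KG_K)


def adjust_flow(flow_kg_s: float, measured_c: float, target_c: float,
                gain: float = 0.2, min_flow: float = 0.5, max_flow: float = 6.0) -> float:
    """Nudge flow in proportion to the temperature error, then clamp to pump limits.

    Accumulating corrections step by step gives this rule integral-style action,
    so within the flow limits it settles with zero steady-state error on a constant load.
    """
    error = measured_c - target_c  # positive when the loop is running too hot
    new_flow = flow_kg_s + gain * error
    return max(min_flow, min(max_flow, new_flow))


def run(heat_profile_kw: list[float], supply_c: float = 30.0,
        target_c: float = 40.0, flow_kg_s: float = 2.0) -> list[tuple[float, float, float]]:
    """Simulate the loop; returns (heat_kw, measured_temp_c, new_flow_kg_s) per step."""
    history = []
    for heat_kw in heat_profile_kw:
        temp_c = return_temp_c(supply_c, heat_kw, flow_kg_s)
        flow_kg_s = adjust_flow(flow_kg_s, temp_c, target_c)
        history.append((heat_kw, temp_c, flow_kg_s))
    return history


if __name__ == "__main__":
    # 20 steps at 100 kW, then a spike to 140 kW (the NVL72 figure cited earlier).
    for step, (heat, temp, flow) in enumerate(run([100.0] * 20 + [140.0] * 20)):
        if step % 5 == 0:
            print(f"step {step:2d}: load {heat:5.1f} kW, return {temp:5.2f} C, flow {flow:4.2f} kg/s")
```

With the default settings the flow settles near 2.39 kg/s at 100 kW and rises to about 3.34 kg/s after the spike, bringing the return temperature back to 40 °C within a few steps.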

"Gigawatt-scale AI factories require a shift toward autonomous, telemetry-driven infrastructure capable of orchestrating extreme power and cooling densities in real time," explained Vladimir Troy, Vice President of AI Infrastructure at NVIDIA. "The integration of the NVIDIA Omniverse DSX blueprint into the Switch LDC EVO operating system provides the high-fidelity simulation and operational intelligence necessary to optimize the deployment of next-generation NVIDIA AI infrastructure."

An Ecosystem Approach to Next-Generation Infrastructure

Building an autonomous AI factory is not a solo endeavor. Switch emphasized that the LDC EVO platform is the result of a deep collaboration with a broad ecosystem of technology leaders. The project brings together NVIDIA's simulation and AI expertise with a host of industry specialists, including Dell Technologies, Schneider Electric, Dassault Systèmes, Cadence, and Oxide Computer Company.

Within the LDC EVO environment, these disparate technologies function as a unified whole. Schneider Electric's ETAP platform provides electrical simulation, Cadence contributes thermal modeling, and Dassault Systèmes offers reality capture. This integration allows teams to view and control every aspect of the facility—from electrical grids and cooling loops to construction lifecycle management—through a single, cohesive interface.

This collaborative model reflects a larger industry trend. NVIDIA's DSX blueprint is being adopted by numerous partners to create an open standard for AI data center design and operation. By building on this shared framework, Switch aims to keep its facilities state-of-the-art today and compatible with future innovations from across the technology landscape.

As the demand for AI compute continues its exponential growth, the race to build its foundations is intensifying. While competitors like CyrusOne and Equinix are also heavily investing in high-density and liquid-cooling solutions, Switch is betting that true automation is the ultimate differentiator. By creating a data center that can think for itself, the company aims to provide a level of operational efficiency, reliability, and scalability that was previously unimaginable. This advanced capability will be demonstrated live at the upcoming NVIDIA GTC 2026 conference, where attendees will get a firsthand look at the AI factory of the future.