SoftBank Targets AI Cloud Dominance with New Infrinia OS

- 150-megawatt AI data center planned by 2026

- Infrinia OS designed to simplify GPU cloud management, reducing operational complexity

- Hybrid strategy combining hyperscaler and AI-native cloud approaches

Experts view SoftBank's Infrinia AI Cloud OS as a strategic pivot that positions the company as a core provider of AI infrastructure, potentially disrupting the market by lowering barriers to entry for GPU cloud services.

SoftBank Targets AI Cloud Dominance with New Infrinia OS

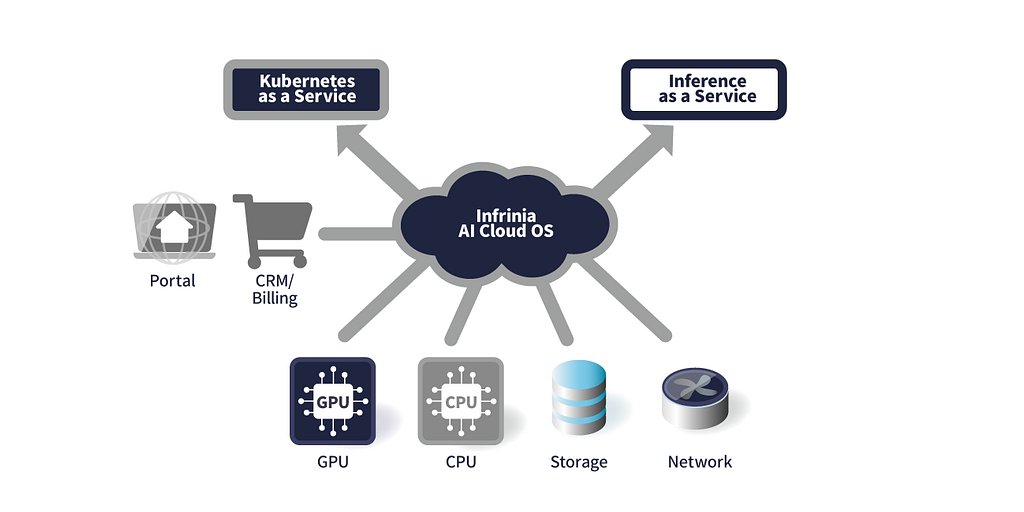

TOKYO, JAPAN – January 21, 2026 – In a decisive move that signals a fundamental shift in its corporate identity, SoftBank Corp. has officially entered the fiercely competitive AI infrastructure market with the announcement of “Infrinia AI Cloud OS.” Developed by its U.S.-based Infrinia Team, the new software stack is designed to be the central nervous system for next-generation AI data centers, aiming to dramatically simplify the deployment and management of the powerful GPU resources that fuel the AI revolution.

The launch represents a significant escalation of SoftBank's ambitions, positioning the telecommunications giant not just as a user or investor in AI, but as a core provider of the foundational technology required to build and scale AI services globally. The Infrinia OS promises to reduce the immense cost and operational complexity faced by data center operators, potentially democratizing access to high-performance computing and accelerating the entire AI lifecycle, from training to inference.

Beyond Carrier: SoftBank's Strategic Pivot to AI Infrastructure

The introduction of Infrinia AI Cloud OS is the most tangible manifestation yet of SoftBank's long-stated “Beyond Carrier” growth strategy. For years, the company has been telegraphing its intent to expand beyond its traditional telecommunications business, and it is now clear that AI infrastructure is the centerpiece of that vision. The company is wagering billions on the belief that the future of the information revolution lies in building the platforms that will enable Artificial General Intelligence (AGI).

This strategy is backed by a torrent of investment across the AI ecosystem. SoftBank is aggressively building out its own AI data centers, with plans for a 150-megawatt facility by 2026, and is developing homegrown Large Language Models (LLMs) specialized for the Japanese language. This software launch complements these hardware and model initiatives, creating a vertically integrated AI stack.

In a statement accompanying the announcement, Junichi Miyakawa, President & CEO of SoftBank Corp., framed the initiative in sweeping terms. "At the core of this initiative is our in-house developed ‘Infrinia AI Cloud OS,’ a GPU cloud platform software designed for next-generation AI infrastructure that seamlessly connects AI data centers, enterprises, service providers and developers," he stated. "The advancement of AI infrastructure requires not only physical components such as GPU servers and storage, but also software that integrates these resources and enables them to be delivered flexibly and at scale."

Miyakawa's comments underscore that Infrinia is not merely a new product but the linchpin of a new software business designed to make SoftBank a central player in the global AI economy.

Simplifying the AI Stack: The Technology Behind Infrinia

The core challenge Infrinia aims to solve is the staggering complexity of running AI workloads at scale. Building and operating a GPU cloud service that can efficiently serve multiple tenants requires highly specialized expertise, often involving bespoke, brittle, and expensive in-house solutions. Infrinia proposes to replace this with a standardized, automated operating system.

Its key features are designed to abstract away the most difficult parts of GPU infrastructure management. The OS provides a fully automated Kubernetes as a Service (KaaS), handling everything from low-level BIOS and RAID settings on servers to the OS, GPU drivers, networking, and storage. This is particularly crucial for state-of-the-art platforms like the NVIDIA GB200 NVL72, allowing operators to manage vast pools of GPUs without a massive team of engineers.
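Automating every layer from BIOS to Kubernetes can be pictured as an ordered, resumable provisioning pipeline. The minimal Python sketch below assumes such a design. The stage names mirror the layers listed above, but `Node`, `STAGES`, `provision`, and the caller-supplied `apply_stage` callback are hypothetical names, and nothing here reflects Infrinia's actual interfaces.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical layer names, ordered bottom-up as the article lists them.
# They are illustrative assumptions, not Infrinia's real stage identifiers.
STAGES = ["bios", "raid", "os", "gpu_driver", "network", "storage", "kubernetes"]


@dataclass
class Node:
    name: str
    completed: list[str] = field(default_factory=list)


def provision(node: Node, apply_stage: Callable[[Node, str], None]) -> Node:
    """Apply each layer in order; a layer runs only after every layer below it succeeded."""
    for stage in STAGES:
        if stage in node.completed:
            continue  # idempotent: skip layers already configured on this node
        # apply_stage is a caller-supplied action (e.g. a call to a BMC or OS agent).
        # If it raises, the stage is not recorded, so a rerun resumes right here.
        apply_stage(node, stage)
        node.completed.append(stage)
    return node


def provision_cluster(names: list[str], apply_stage: Callable[[Node, str], None]) -> list[Node]:
    """Bring up every node of a new cluster with the same layered pipeline."""
    return [provision(Node(name), apply_stage) for name in names]
```

Ordering the stages bottom-up means, for example, that GPU drivers are never installed on a node whose OS step failed. Because a failed stage is not recorded as complete, calling `provision` again resumes from the layer that broke instead of starting over.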

One of its most significant technical differentiators is its ability to dynamically reconfigure physical resources. Using software-defined controls, Infrinia can adjust high-speed NVIDIA NVLink connections and memory allocation on the fly as customers create or delete their clusters. This allows for the creation of optimally configured compute environments tailored precisely to the needs of a specific AI model, maximizing performance and efficiency.
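Creating and deleting clusters on shared hardware can be modeled, very loosely, as carving one NVLink domain into per-tenant partitions. The sketch below assumes a 72-GPU domain (the GPU count of a GB200 NVL72 rack) and lets any free GPUs be grouped into a partition, lowest index first. The class and method names are hypothetical, and the step that actually reprograms NVLink connectivity, which the announcement does not describe, is left out.

```python
from typing import Optional


class NVLinkDomain:
    """Toy model of one rack-scale NVLink domain split into per-tenant partitions.

    Assumption: any free GPUs may be grouped together; real partitioning rules
    and the interconnect reconfiguration itself are not modeled.
    """

    def __init__(self, num_gpus: int = 72) -> None:
        # owner[i] holds the ID of the cluster using GPU i, or None if it is free.
        self.owner: list[Optional[str]] = [None] * num_gpus

    def free_gpus(self) -> int:
        return self.owner.count(None)

    def create_cluster(self, cluster_id: str, gpus: int) -> list[int]:
        """Bind `gpus` free GPUs to a new cluster and return their indices."""
        if cluster_id in self.owner:
            raise ValueError(f"cluster {cluster_id!r} already exists")
        if gpus < 1:
            raise ValueError("a cluster needs at least one GPU")
        if gpus > self.free_gpus():
            raise RuntimeError(f"cannot allocate {gpus} GPUs; {self.free_gpus()} free")
        chosen = [i for i, o in enumerate(self.owner) if o is None][:gpus]
        for i in chosen:
            self.owner[i] = cluster_id
        return chosen

    def delete_cluster(self, cluster_id: str) -> int:
        """Return a cluster's GPUs to the shared pool; reports how many were freed."""
        freed = 0
        for i, o in enumerate(self.owner):
            if o == cluster_id:
                self.owner[i] = None
                freed += 1
        return freed

    def partitions(self) -> dict[str, list[int]]:
        """Current cluster-to-GPU layout that a control plane would push to the fabric."""
        layout: dict[str, list[int]] = {}
        for i, o in enumerate(self.owner):
            if o is not None:
                layout.setdefault(o, []).append(i)
        return layout
```

Deleting a cluster immediately returns its GPUs to the pool, so the next `create_cluster` call can regroup them for a different tenant. `partitions()` then describes the new layout that, in a real system, would have to be applied to the interconnect.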

Furthermore, the platform offers an Inference as a Service (Inf-aaS) component with OpenAI-compatible APIs. This allows developers to deploy large language models for inference tasks without ever needing to interact with Kubernetes or the underlying hardware, a feature that drastically lowers the barrier to entry for building and scaling AI applications. Combined with secure multi-tenancy and automated system maintenance, the OS presents a compelling package for any organization looking to offer GPU cloud services.
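Because the inference endpoints are described as OpenAI-compatible, a client should in principle only need the standard chat-completions request shape. The Python sketch below builds such a request with the standard library. The base URL, model name, and API key are placeholders, since the article does not publish Infrinia's actual endpoint, model catalogue, or authentication scheme.

```python
import json
import urllib.request

# Placeholder values: Infrinia's real endpoint and model names are not public in the article.
BASE_URL = "https://inference.example.com/v1"
MODEL = "example-llm"


def build_chat_request(prompt: str, api_key: str,
                       base_url: str = BASE_URL, model: str = MODEL) -> urllib.request.Request:
    """Build a POST to the OpenAI-style /chat/completions route."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_json: dict) -> str:
    """Pull the assistant's text out of an OpenAI-style chat completion response."""
    return response_json["choices"][0]["message"]["content"]


def chat(prompt: str, api_key: str) -> str:
    """Send the request and return the model's reply (requires a reachable endpoint)."""
    req = build_chat_request(prompt, api_key)
    with urllib.request.urlopen(req, timeout=60) as resp:
        return extract_reply(json.load(resp))
```

This kind of compatibility is what would let existing tooling move over with minimal changes: the official `openai` Python package, for instance, accepts a `base_url` argument when constructing its client, so in principle only the URL and key would differ.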

A New Contender in the GPU Cloud Wars

With Infrinia, SoftBank is not just entering a new market; it is challenging an established and formidable field of competitors. The AI cloud landscape is currently dominated by hyperscalers like Amazon Web Services (AWS), Microsoft Azure, and Google Cloud, which offer extensive GPU capacity within their vast service ecosystems. At the same time, a new class of specialized, AI-native cloud providers such as CoreWeave and Lambda Labs have gained significant traction by offering bare-metal performance and services tailored specifically for AI developers.

SoftBank's strategy appears to be a hybrid of these approaches. It is not simply renting out GPUs; it is providing the essential software layer that enables others to become efficient GPU cloud providers. This positions Infrinia as an enabling technology that could power a new wave of cloud services, or even be adopted by existing players seeking to reduce their own operational overhead.

“This move elevates SoftBank from a pure infrastructure operator to an AI-native platform-level competitor,” noted one industry analyst. “The market is rapidly moving away from just offering raw compute power. The real battle is in providing a full-stack, automated platform that makes AI development faster and cheaper. By focusing on the OS layer, SoftBank is targeting a critical and underserved part of the value chain.”

By offering a solution designed to reduce Total Cost of Ownership (TCO) and operational burden, Infrinia could disrupt the market by empowering a broader range of companies to compete with the hyperscalers on both performance and price.

From Internal Rollout to Global Ambition

SoftBank's go-to-market plan for Infrinia is both cautious and ambitious. The initial deployment will be within SoftBank's own burgeoning GPU cloud services, allowing the company to refine the product in a real-world, large-scale environment. However, the company has made its global intentions clear, with the Infrinia Team aiming to expand deployment to overseas data centers and cloud environments.

This global vision is supported by the colossal investments that SoftBank Corp.'s parent company, SoftBank Group, is making in digital infrastructure worldwide. SoftBank Group is a key partner in the audacious “Stargate” initiative, a reported multi-hundred-billion-dollar project with OpenAI and Oracle to construct a series of massive AI data centers across the U.S. and other regions. Its recent agreement to acquire DigitalBridge would further give the group the assets and expertise to scale data centers, connectivity, and edge networks globally.

Infrinia AI Cloud OS appears to be the software intelligence that will orchestrate these vast physical assets. While the path to global adoption will be challenging, requiring SoftBank to prove its technology against entrenched incumbents, the company is leveraging its deep pockets and strategic partnerships to carve out a foundational role in the next era of computing. The launch of Infrinia is a clear declaration that SoftBank is no longer content to be just a carrier of information; it intends to be an architect of the intelligence it carries.