Panmnesia's CXL 3.2 Chip to Redefine AI Data Center Architecture

- $588 billion: Projected global composable infrastructure market size by 2034, up from $14 billion in 2025 (CAGR >50%).

- CXL 3.2: Panmnesia claims its chip is the world's first to fully implement this specification, including Port-Based Routing (PBR).

- Double-digit nanosecond latency: Reported by Panmnesia for its proprietary controller, and critical for AI performance.

Panmnesia positions its CXL 3.2 chip as a significant technological leap in AI data center architecture. By fully implementing CXL 3.2, including Port-Based Routing, the chip is meant to offer greater flexibility and efficiency, and it could reshape the industry's approach to large-scale AI infrastructure.

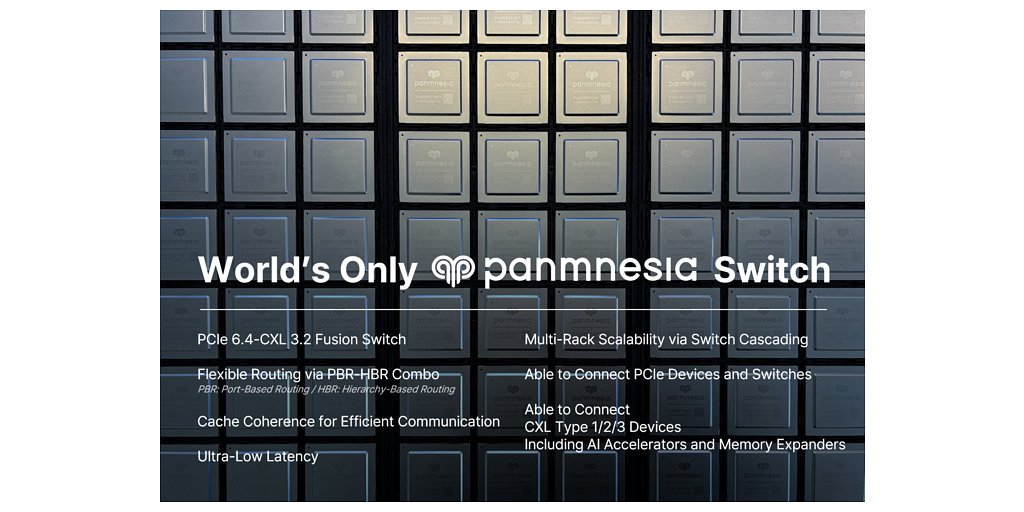

DAEJEON, South Korea – April 15, 2026 – In a move poised to reshape the architecture of artificial intelligence infrastructure, South Korean fabless company Panmnesia has announced it will begin mass production of its PCIe 6.4-CXL 3.2 fusion fabric switch chip in the second half of this year. The company asserts this is the world's only silicon to fully implement the CXL 3.2 specification, including the highly flexible Port-Based Routing (PBR) protocol, a feature that could provide a significant leap in data center efficiency and performance.

The announcement places the relatively young company, which secured approximately $60 million in Series A funding in 2024, at the forefront of the race to solve the critical interconnect bottlenecks plaguing large-scale AI and high-performance computing (HPC) environments. As AI models grow exponentially in size, the ability to move data quickly and efficiently between processors, accelerators, and memory has become a paramount challenge.

The Dawn of Rack-Scale Composable Systems

The explosion in generative AI has pushed current data center designs to their limits. The traditional approach of tightly coupling CPU, memory, and accelerators like GPUs within a single server box is proving inefficient and costly. This leads to stranded resources—expensive GPUs sitting idle while waiting for data, or memory pools being underutilized. Panmnesia's switch chip directly targets this problem by enabling a composable disaggregated infrastructure at the rack scale.

By supporting both the ubiquitous PCIe protocol and the emerging CXL standard on a single die, the fusion switch acts as a universal translator and traffic director. It can connect a diverse pool of resources—including PCIe-based GPUs, CXL-enabled CPUs, memory expanders, and AI accelerators—into a single, unified fabric. This allows system administrators to dynamically assemble and reconfigure systems on the fly, allocating the precise amount of compute, memory, and acceleration needed for a specific task, such as training a large language model (LLM) or running a complex scientific simulation.
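To make the idea of composing systems from a shared pool concrete, here is a minimal sketch. It is an illustration only, not Panmnesia's software interface: the `Pool` class, its `compose` and `release` methods, the job names, and the resource counts are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Pool:
    """Free resources attached to a fabric (hypothetical counts and units)."""
    gpus: int
    memory_gb: int
    allocations: dict = field(default_factory=dict)

    def compose(self, job: str, gpus: int, memory_gb: int) -> bool:
        """Reserve resources for a job; return False if the pool cannot satisfy it."""
        if job in self.allocations or gpus > self.gpus or memory_gb > self.memory_gb:
            return False
        self.gpus -= gpus
        self.memory_gb -= memory_gb
        self.allocations[job] = (gpus, memory_gb)
        return True

    def release(self, job: str) -> None:
        """Return a job's resources to the pool so other jobs can use them."""
        gpus, memory_gb = self.allocations.pop(job)
        self.gpus += gpus
        self.memory_gb += memory_gb


pool = Pool(gpus=8, memory_gb=4096)
ok_train = pool.compose("llm-training", gpus=6, memory_gb=3072)
ok_sim = pool.compose("simulation", gpus=4, memory_gb=512)  # only 2 GPUs remain
pool.release("llm-training")
ok_retry = pool.compose("simulation", gpus=4, memory_gb=512)
print(ok_train, ok_sim, ok_retry)
```

In this toy model the second job is refused while the first holds six of the eight GPUs, then succeeds once those GPUs return to the pool. That is the reconfiguration behavior the paragraph above describes, reduced to simple bookkeeping.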

The market for such flexibility is expanding at a breakneck pace. Projections show the global composable infrastructure market growing from just over $14 billion in 2025 to a staggering $588 billion by 2034, representing a compound annual growth rate (CAGR) of over 50%. Panmnesia is betting that its advanced switch is the key to unlocking this potential, promising significant reductions in both capital expenditure (CAPEX) and operating expenditure (OPEX) for data center operators.
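The growth rate quoted above can be checked from the two endpoint figures. The sketch below assumes round values of $14 billion for 2025 and $588 billion for 2034 (the article says "just over" $14 billion, so the true starting point is slightly higher) and nine compounding years; the helper name `cagr` is ours.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.5 means 50% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Endpoint figures from the article, in billions of US dollars.
rate = cagr(14.0, 588.0, 2034 - 2025)
print(f"Implied CAGR: {rate:.1%}")
```

Under these assumptions the implied rate comes out slightly above 50% per year, which is consistent with the "over 50%" figure in the projection.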

A New Route to Performance: The Power of PBR

At the heart of Panmnesia's technological claim is its unique support for Port-Based Routing (PBR), a key feature of the CXL 3.2 specification. Most existing interconnects rely on hierarchy-based routing (HBR), which forces connections into a rigid, tree-like structure with the CPU at its root. This creates longer, often convoluted data paths and potential performance bottlenecks.

PBR shatters this limitation. It allows devices and switches to be interconnected in virtually any topology—such as a mesh, torus, or dragonfly—that best suits the workload. This enables the design of significantly shorter and more direct data paths between components. For instance, two GPUs can exchange data directly with minimal CPU involvement, a capability Panmnesia calls Direct Peer-to-Peer communication. This dramatically reduces latency and frees up the CPU for other critical tasks.
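The effect of topology on path length can be illustrated with a toy graph model. This is a sketch under stated assumptions, not a model of CXL routing itself: the device and switch names are hypothetical, every link counts as one hop, and the only difference between the two fabrics is a direct switch-to-switch link that a strict tree does not allow.

```python
from collections import deque


def hops(links, src, dst):
    """Return the fewest links between src and dst, found by breadth-first search."""
    graph = {}
    for a, b in links:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    seen, queue = {src}, deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    return None  # dst is unreachable from src


# Hypothetical tree: a root switch below the CPU, two leaf switches, one GPU each.
tree = [("cpu", "root_sw"), ("root_sw", "sw_a"), ("root_sw", "sw_b"),
        ("sw_a", "gpu0"), ("sw_b", "gpu1")]
# Same devices plus a direct link between the leaf switches (non-tree topology).
mesh = tree + [("sw_a", "sw_b")]

tree_hops = hops(tree, "gpu0", "gpu1")
mesh_hops = hops(mesh, "gpu0", "gpu1")
print(tree_hops, mesh_hops)
```

In the tree, traffic between the two GPUs must climb to the root switch and come back down; with the extra link it crosses directly between the leaf switches. Real fabrics are far larger, and actual latency depends on much more than hop count, but the sketch shows why freedom to add such links shortens paths.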

This routing flexibility is powered by a fully proprietary controller designed by Panmnesia. The company reports the controller achieves double-digit nanosecond latency, a critical factor for performance in latency-sensitive AI applications. Furthermore, because the intellectual property is owned in-house, the controller logic can be modified to meet specific customer needs, opening the door for tailored, custom solutions.

A Crowded Field with a Potential Frontrunner

Panmnesia is entering a competitive but rapidly evolving market. Established giants and agile startups alike are vying for a piece of the CXL interconnect pie. Marvell, a major player, recently strengthened its position by acquiring XConn Technologies and has announced its own CXL 3.0 switch. Other companies like Liqid, Rambus, and Elastics.cloud are also developing CXL solutions, primarily focusing on CXL 2.0, CXL 3.1, or providing controller IP rather than a complete switch chip.

However, Panmnesia's claim of having the world's only silicon fully implementing the CXL 3.2 specification with PBR appears to give it a distinct, time-sensitive advantage. While competitors are focused on earlier versions of the standard, Panmnesia's solution, first sampled in late 2025 and showcased at CES 2026, is built for the next wave of AI infrastructure. This distinction is not merely academic; the advanced fabric management, peer-to-peer communication, and flexible topologies enabled by CXL 3.2 and PBR are precisely what the industry needs to overcome the memory wall and scale future AI workloads effectively.

From Silicon to Systems: The Road to Adoption

The journey from a press release to widespread market adoption is long, but Panmnesia has already laid the groundwork. The company is not just showing diagrams; it is offering samples of the silicon and complete pilot systems to early access partners for validation. A development board, the PANRDK, is also available, allowing partners to begin testing and integrating the technology into their own environments.

With mass production scheduled for the latter half of 2026, the industry will be watching closely. The successful deployment of Panmnesia's CXL 3.2 fusion switch could catalyze a fundamental shift in how data centers are designed and operated. If the promised gains in performance, efficiency, and cost savings materialize at scale, it will not only validate the company's bold vision but also accelerate the entire industry's transition to the truly dynamic and composable systems required for the age of AI.
