OpenObserve Launches AI Agent to Automate IT, Challenging Giants

- 140x lower storage costs compared to indexing-heavy systems like Elasticsearch

- 90% of incident response grunt work automated by AI SRE agent

- 18,000+ GitHub stars and adoption by 6,000+ organizations for OpenObserve's open-source platform

An industry analyst cited in the announcement says OpenObserve's unified observability platform and AI-driven SRE model address critical gaps in modern IT operations, reflecting a shift from reactive troubleshooting to proactive, autonomous systems.

MENLO PARK, Calif. – April 29, 2026 – OpenObserve today unveiled Observability 3.0, an AI-native platform featuring an autonomous AI Site Reliability Engineering (SRE) agent designed to automate incident response and unify the monitoring of infrastructure, applications, and large language models (LLMs). The launch positions the company as a direct challenger to legacy observability stacks and established commercial vendors, aiming to shift engineering teams from reactive troubleshooting to proactive, autonomous operations.

The new platform is built to handle the scale and complexity of modern AI workloads, a challenge that has strained older stacks such as Prometheus-Grafana and ELK. At the heart of the release is the AI SRE, an autonomous layer that uses unified telemetry to identify the root causes of system issues and can either recommend corrective actions or execute them independently, reducing the need for manual intervention.

The Dawn of the Autonomous SRE

The introduction of an autonomous AI SRE agent marks a significant step toward the future of IT operations. In modern, complex systems, an incident can generate a tidal wave of logs, metrics, and traces that human teams cannot sift through manually in real time. OpenObserve says its AI SRE agent is designed to perform "90% of the grunt work" involved in incident response.

When an alert is triggered, the AI SRE, an enterprise-only feature, immediately begins analyzing the incident. It connects to the OpenObserve platform and the underlying infrastructure using a proprietary protocol, allowing it to correlate signals across disparate sources like Kubernetes clusters, cloud environments, and application traces. The goal is to deliver a structured root cause analysis to engineers before they have even started their manual investigation, dramatically reducing downtime and business impact.

This system is complemented by proactive anomaly detection, which provides early warnings of potential issues before they escalate into full-blown outages. By receiving alerts within their existing workflow, teams can address problems before they affect users.

“Observability 3.0 is a new operating model for engineering teams, and the companies that adopt it will ship faster, sleep better, and outpace those still wiring together legacy tools,” said Prabhat Sharma, founder and CEO of OpenObserve. “With Observability 3.0, companies can move from firefighting to proactive autonomous operations and get back to building the products that drive their businesses forward.”

Challenging the Observability Establishment

OpenObserve is taking direct aim at what it calls the friction of legacy observability. As telemetry volumes grow an estimated 30% year-over-year, many established commercial vendors have responded by encouraging customers to adopt "data diets," deliberately limiting the data they ingest or store to control skyrocketing costs. This practice, however, often removes the very context engineers need to diagnose complex problems.
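To put the 30% growth estimate in perspective, the short Python sketch below compounds it over several years. Only the 30% rate comes from the article; the 100 TB starting volume and the function names are hypothetical, chosen purely for illustration.

```python
import math

# Estimated year-over-year telemetry growth cited in the article.
GROWTH_RATE = 0.30


def projected_volume(start_tb: float, years: int, rate: float = GROWTH_RATE) -> float:
    """Return the volume after `years` of compound growth at `rate`."""
    return start_tb * (1 + rate) ** years


def years_to_double(rate: float = GROWTH_RATE) -> float:
    """Return how many years it takes for volume to double at `rate`."""
    return math.log(2) / math.log(1 + rate)


if __name__ == "__main__":
    start_tb = 100.0  # hypothetical starting volume, not from the article
    for year in range(6):
        print(f"year {year}: {projected_volume(start_tb, year):.1f} TB")
    print(f"doubling time: {years_to_double():.2f} years")
```

At this rate, volume doubles in well under three years, which helps explain why storage cost per unit of data becomes the dominant concern.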

OpenObserve's counter-proposal is a platform that eliminates the need for such compromises. The company claims its S3-native architecture, which uses Apache Parquet for columnar storage on inexpensive object storage, delivers up to 140 times lower storage costs compared to indexing-heavy systems like Elasticsearch. This design avoids the high costs of block storage and the performance bottlenecks of massive indexes, allowing organizations to store and query vast amounts of telemetry data cost-effectively.

This architectural choice enables a pricing model based on data volume rather than per-host or per-container fees, which can penalize organizations for scaling their infrastructure. The result is a platform that encourages comprehensive data collection, not restriction.

An industry analyst echoes this view. "Vendors responding with ‘data diets’ are solving the wrong problem; engineering teams need more contextual, correlated telemetry, not less, to diagnose issues in AI-driven environments," noted Paul Nashawaty, Principal Analyst at theCUBE Research. "This is driving a shift to unified observability, where a shared telemetry layer replaces stitched-together tools. Ultimately, it’s leading to autonomous operation, AI-driven SRE models such as OpenObserve's where systems surface root cause and act, instead of teams manually triaging incidents."

Solving the LLM Observability Puzzle

A critical component of the new platform is its dedicated LLM Observability suite. As enterprises rush to deploy generative AI applications, they are discovering a new set of monitoring challenges. LLMs are non-deterministic, prone to "hallucinations," and their inner workings are often a black box, making them difficult to debug with traditional tools.

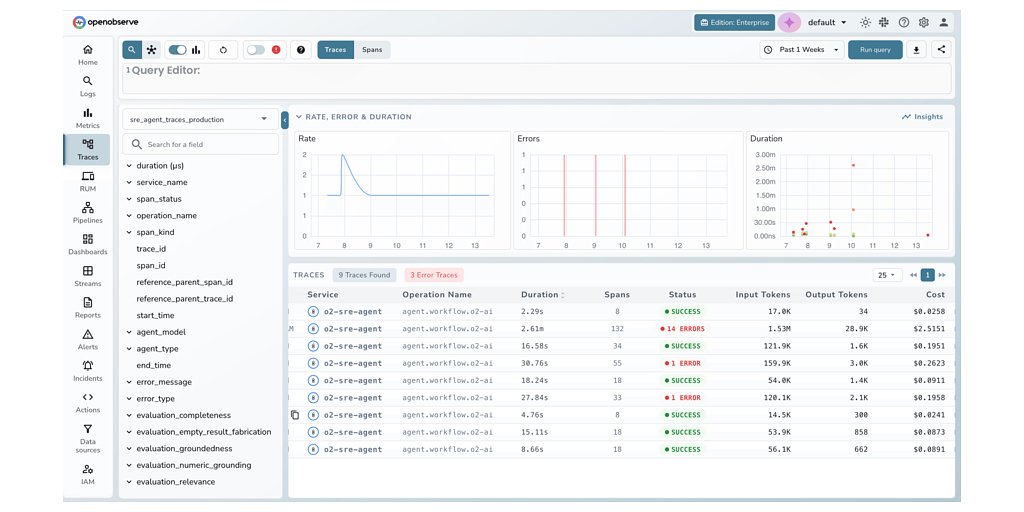

Specialized startups have emerged to tackle this problem, but they often create yet another data silo. OpenObserve’s strategy is to integrate LLM observability directly into its unified platform. The new features provide a single interface to monitor everything from server-level metrics to application performance and specific AI behaviors. This includes end-to-end tracing of LLM agent actions, detailed prompt monitoring, evaluation tracking to measure output quality, and granular control over token consumption and cost.

By unifying this AI-specific telemetry with traditional infrastructure and application data, developers and MLOps teams can gain a holistic view. For example, they can correlate a spike in LLM response latency with a bottleneck in the underlying Kubernetes cluster or trace a poor user experience back to a problematic prompt, all within the same system. This integrated approach is crucial for building and maintaining reliable, cost-effective, and safe generative AI applications in production environments.
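The kind of cross-signal correlation described above can be sketched in a few lines of Python. This is not OpenObserve's API or method. It is a plain Pearson correlation over two hypothetical per-minute series (LLM response latency and Kubernetes node CPU usage), and the sample values are invented for illustration.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Return the Pearson correlation coefficient of two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("series must have the same length of at least 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# Hypothetical per-minute samples, invented for this example.
llm_latency_ms = [420, 430, 415, 900, 1250, 1300, 480, 440]
node_cpu_pct = [35, 38, 36, 88, 95, 97, 42, 37]

r = pearson(llm_latency_ms, node_cpu_pct)

if __name__ == "__main__":
    print(f"latency vs. node CPU correlation: {r:.2f}")
```

A coefficient close to 1 suggests the latency spike and the CPU saturation move together, which is a cue to investigate the cluster. It does not prove that the cluster is the cause.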

Open Source Roots and Commercial Growth

Despite its enterprise ambitions, OpenObserve maintains a strong foundation in the open-source community. The core platform is open source and has garnered significant developer traction, with over 18,000 stars on GitHub and adoption by more than 6,000 organizations, including several Fortune 100 companies. The company has committed to keeping its open-source version feature-complete and production-ready, a strategy designed to build trust and a broad user base.

The new AI SRE agent represents the core of its commercial offering, following a classic open-core model. This strategy is backed by a recent $10 million Series A funding round led by Nexus Venture Partners and Dell Technologies Capital, which is fueling the company's commercial expansion.

Furthermore, the platform's 100% native support for OpenTelemetry, the emerging industry standard for instrumentation, reinforces its commitment to an open ecosystem. This allows organizations to avoid vendor lock-in and easily integrate OpenObserve into their existing toolchains, a key consideration for modern engineering teams looking for both power and flexibility.
