Nudge Security Tackles Shadow AI in the Enterprise Wild West

- 80% of organizations report having already encountered risks from agentic AI, including improper data exposure and unauthorized access to critical systems.

- 71% of workers have used unapproved consumer AI tools on the job (Microsoft-commissioned study).

- Security breaches at organizations with high levels of shadow AI cost an average of $670,000 more to remediate (IBM report).

Experts agree that while AI agents offer significant productivity gains, their unmanaged use poses substantial security and compliance risks, necessitating proactive governance to balance innovation with control.

Taming the AI Wild West: Firms Race to Govern Shadow Agents

AUSTIN, Texas – March 24, 2026 – As employees increasingly deploy autonomous AI "agents" to streamline their work, a new and chaotic digital frontier has emerged within corporations, fraught with unseen security risks. In response to this growing "shadow AI" problem, cybersecurity firm Nudge Security today announced new capabilities designed to give organizations the visibility and control needed to tame this wild west of unmanaged artificial intelligence.

The announcement comes as enterprises grapple with a dual mandate: harness the power of AI for a competitive edge while simultaneously protecting against the significant threats it introduces. Agentic AI, meaning systems that can act autonomously to perform tasks, is the fastest-growing enterprise technology priority. Yet it is also a top security concern for nearly half of all security professionals, and for good reason: a staggering 80% of organizations report having already encountered risks from agentic AI, including improper data exposure and unauthorized access to critical systems.

The Rise of the Shadow AI Workforce

The core of the issue lies in the rapid, decentralized adoption of AI tools by employees. Much like the "shadow IT" of the past decade, a new wave of "shadow AI" is now proliferating. Research indicates that 78% of AI users bring their own AI tools to work, and one Microsoft-commissioned study found that 71% of workers have used unapproved consumer AI tools on the job.

Unlike simple applications, AI agents are often designed to integrate deeply with corporate systems, requiring highly permissive access to data and other software to function. An employee might create a custom agent using a low-code platform like Microsoft Copilot Studio or n8n to automate report generation. In doing so, they might unknowingly grant it broad access to sensitive sales data in Salesforce, customer information in ServiceNow, and proprietary documents in a shared drive.

This creates a minefield of potential security and compliance failures. Industry analysts warn that when employees paste confidential data into unvetted AI tools, that information can be logged, processed, and even used for retraining models, placing it permanently outside the organization's control. The financial stakes are high; according to a recent IBM report, security breaches at organizations with high levels of shadow AI cost an average of $670,000 more to remediate.

From Discovery to Governance at the Workforce Edge

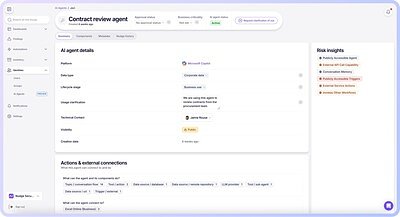

Nudge Security aims to address this challenge by extending its security philosophy from SaaS applications to the new agentic layer. The company’s new AI agent discovery capabilities are designed to continuously find, assess, and govern agents at their source of creation. The platform can identify agents built on platforms like Microsoft Copilot Studio, Salesforce Agentforce, and n8n, among others.

Once an agent is discovered, the system inventories its permissions, the resources it can access, and who created it. It then surfaces critical risks, such as publicly accessible agents that could be exploited by outsiders, hardcoded credentials that bypass security protocols, or "orphaned" agents that continue to run without a clear owner.
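To make those three checks concrete, here is a minimal Python sketch of how a single inventory record might be screened for them. It is not Nudge Security's implementation or API: the `AgentRecord` fields, the `find_risks` function, and the string markers used to spot embedded secrets are all hypothetical, and a real scanner would need far more robust secret detection than substring matching.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class AgentRecord:
    """Hypothetical inventory entry for one discovered AI agent."""
    name: str
    platform: str                        # e.g. "Copilot Studio" or "n8n"
    creator: Optional[str]               # None when no owner can be identified
    creator_active: bool = True          # False if the creator has left the org
    publicly_accessible: bool = False    # reachable without authentication
    config_text: str = ""                # exported agent or workflow definition
    resources: list[str] = field(default_factory=list)  # systems it can reach

# Hypothetical, deliberately naive markers of a secret pasted into a config.
SECRET_MARKERS = ("api_key", "password=", "client_secret", "bearer ")

def find_risks(agent: AgentRecord) -> list[str]:
    """Return the risk labels that apply to a single agent."""
    risks: list[str] = []
    # Anyone outside the organization may be able to invoke a public agent.
    if agent.publicly_accessible:
        risks.append("public")
    # Credentials embedded in the definition bypass central secret management.
    lowered = agent.config_text.lower()
    if any(marker in lowered for marker in SECRET_MARKERS):
        risks.append("hardcoded_credentials")
    # No identifiable, active owner means nobody is accountable for the agent.
    if agent.creator is None or not agent.creator_active:
        risks.append("orphaned")
    return risks

report_bot = AgentRecord(
    name="weekly-sales-report",
    platform="n8n",
    creator="alice@example.com",
    creator_active=False,
    config_text="headers:\n  Authorization: Bearer sk-test-123",
    resources=["Salesforce: Opportunities", "Shared drive: /finance"],
)
print(find_risks(report_bot))  # ['hardcoded_credentials', 'orphaned']
```

Keeping each check as a separate, simple condition makes it easy to add new rules as agent platforms expose more metadata.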

This isn't a new product direction for the company, but rather an evolution of its core mission to secure the "Workforce Edge"—the point where employees make thousands of daily decisions about technology. Unlike point solutions built specifically for AI, Nudge Security leverages its existing, deep visibility into how employees use technology across the enterprise. For existing customers, these new capabilities can be enabled without any new deployments.

"The security teams that build a real inventory of their AI agents now, with actual risk visibility and clear accountability, will put their organizations in a fundamentally advantaged position," said Russ Spitler, CEO and co-founder of Nudge Security, in the announcement. "Our AI agent discovery lets teams embrace AI innovation while also addressing the new risks these agents introduce."

Navigating a New Regulatory and Security Paradigm

The uncontrolled spread of AI agents introduces profound challenges for legal and compliance teams navigating a complex web of global regulations. Laws like Europe's General Data Protection Regulation (GDPR) and the landmark EU AI Act have extraterritorial reach, so they can bind companies headquartered outside the EU: GDPR applies when a company offers goods or services to, or monitors the behavior of, people in the EU, and the AI Act applies when an AI system is placed on the EU market or its output is used in the EU.

Under the EU AI Act, which entered into force in August 2024 and phases in its obligations over the following years, AI systems are classified by risk. Agents that make significant decisions, such as screening job candidates or assessing creditworthiness, are deemed "high-risk" and subject to stringent requirements for documentation, auditing, and human oversight. Penalties scale with the severity of the violation; the most serious breaches can draw fines of up to €35 million or 7% of a company's global annual turnover, whichever is higher. Without a complete inventory of every AI system in use, proving compliance becomes nearly impossible.
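As a rough illustration of how that top-tier ceiling scales with company size, the sketch below assumes the "whichever is higher" rule between the €35 million figure and 7% of turnover (smaller firms are treated differently under the Act). The function name and the turnover figures are hypothetical, and the result is a maximum exposure, not a prediction of any actual fine.

```python
def max_ai_act_fine(global_turnover_eur: int,
                    fixed_cap_eur: int = 35_000_000,
                    turnover_percent: int = 7) -> int:
    """Ceiling of a top-tier fine, assuming the 'whichever is higher' rule.

    Integer arithmetic keeps the euro amounts exact.
    """
    turnover_based = global_turnover_eur * turnover_percent // 100
    return max(fixed_cap_eur, turnover_based)

# Hypothetical companies with €100 million and €2 billion in annual turnover.
for turnover in (100_000_000, 2_000_000_000):
    print(f"turnover €{turnover:,} -> ceiling €{max_ai_act_fine(turnover):,}")
# turnover €100,000,000 -> ceiling €35,000,000
# turnover €2,000,000,000 -> ceiling €140,000,000
```

For the smaller company the fixed €35 million figure dominates; once turnover passes €500 million, the 7% figure becomes the binding ceiling.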

The autonomous nature of AI agents complicates adherence to foundational data privacy principles like data minimization and purpose limitation. An agent might collect more data than is strictly necessary or repurpose it for new tasks, creating a compliance breach without any direct human action. Solutions that can automatically discover these agents and document their capabilities are becoming essential tools for modern governance.

Balancing Innovation with Control

While the risks are substantial, most organizations recognize that banning AI is not a viable strategy. The productivity gains are too significant to ignore. The challenge, therefore, is to enable innovation safely. This is where a new approach to security governance is emerging, one focused on collaboration and empowerment rather than outright restriction.

Nudge Security's approach embodies this shift not only by identifying risk but also by engaging the human creator directly. Using automated, policy-driven "nudges," the platform can contact an agent's owner in real time to ask them to justify its purpose, confirm its data access needs, and remediate identified security flaws.
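A nudge of this kind can be sketched as a policy table that maps each detected risk to a request for the agent's owner, plus a data-minimization check that compares the access an agent was granted with the access its owner says it needs. The `Nudge` type, the `POLICY` wording, the scope names, and `build_nudge` are hypothetical illustrations, not a description of Nudge Security's actual product behavior.

```python
from dataclasses import dataclass

@dataclass
class Nudge:
    """Hypothetical message sent to the owner of an AI agent."""
    recipient: str
    agent: str
    requests: list[str]

# Hypothetical policy: the follow-up request issued for each detected risk.
POLICY = {
    "public": "Confirm the agent must be reachable without sign-in, or restrict it.",
    "hardcoded_credentials": "Move embedded credentials to an approved secrets store.",
}

def build_nudge(owner: str, agent: str, risks: list[str],
                granted_scopes: list[str], needed_scopes: list[str]) -> Nudge:
    """Assemble the requests for one agent owner."""
    # Every nudge asks the owner to justify why the agent exists.
    requests = ["Describe the business purpose of this agent."]
    # Add a remediation request for each risk the policy covers.
    requests += [POLICY[risk] for risk in risks if risk in POLICY]
    # Data minimization: flag access the owner does not say the agent needs.
    excess = sorted(set(granted_scopes) - set(needed_scopes))
    if excess:
        requests.append("Approve removal of unused access: " + ", ".join(excess))
    return Nudge(recipient=owner, agent=agent, requests=requests)

nudge = build_nudge(
    owner="alice@example.com",
    agent="weekly-sales-report",
    risks=["hardcoded_credentials"],
    granted_scopes=["salesforce.read", "salesforce.write", "drive.read"],
    needed_scopes=["salesforce.read"],
)
for line in nudge.requests:
    print("-", line)
```

Driving the requests from a policy table, rather than hardcoding them, lets a security team change what owners are asked without touching the detection logic.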

This behavioral science-based model aims to make security a natural part of the innovation process. It transforms security teams from gatekeepers into partners, providing the guardrails that allow employees to experiment and build with confidence. By fostering a culture of shared responsibility, organizations can unlock the transformative potential of agentic AI while maintaining the security and compliance posture necessary to operate in a modern digital landscape.