The Post-Quantum Convergence: Why Enterprise Firewalls are Fracturing Under Next-Gen Cryptography

- $2.0 trillion to $3.3 trillion: Potential GDP at risk from a single-day quantum-enabled cyberattack on a top-five U.S. bank (Citi Institute, February 2026).

- 14.7 KB: Size of a post-quantum TLS handshake, exceeding traditional TCP congestion window limits.

- 7,000+ publicly reachable servers: Exposed due to insecure MCP credential handling (OX Security, April 2026).

Experts agree that the transition to post-quantum cryptography is no longer theoretical but an immediate operational crisis, requiring urgent triage of legacy systems and empirical validation of firewall architectures to handle the dual burdens of PQC and GenAI workloads.

Disclosure: SecureIQLab provided this article's source materials under embargo. Cameron Camp's comments were provided via written Q&A.

For years, the corporate transition to a quantum-safe cryptographic framework has been treated primarily as a theoretical exercise, confined to academic physics symposiums, speculative threat intelligence, and long-term risk assessments. Today, however, that transition has rapidly and irreversibly devolved into an immediate, high-friction network engineering crisis.

Corporate boards, Chief Information Security Officers (CISOs), and network architects find themselves trapped in a precarious convergence: the catastrophic operational overhead of deploying National Institute of Standards and Technology (NIST) Post-Quantum Cryptography (PQC) standards, the explosive integration of highly vulnerable Generative AI (GenAI) workloads, and a rapidly tightening vise of international regulatory mandates.

The Macroeconomic Threat and "Harvest Now, Decrypt Later"

The macroeconomic exposure of this transition is profound, shifting the quantum threat from a future-state IT operations issue to a present-day systemic financial risk. In a landmark February 2026 report titled "Quantum Threat: The Trillion-Dollar Security Race Is On," the Citi Institute modeled the impact of a specific single-day, quantum-enabled cyberattack scenario targeting Fedwire access at a top-five United States bank. Under that modeled scenario, the resulting macroeconomic fallout was calculated at an astonishing $2.0 trillion to $3.3 trillion in gross domestic product (GDP) at risk.

While the exact arrival date of a functional quantum computer capable of breaking RSA-2048 encryption remains heavily debated, the urgency of enterprise migration is definitively decoupled from that horizon. Driven by "Harvest Now, Decrypt Later" cyberespionage campaigns, state-sponsored adversaries and advanced persistent threat (APT) groups are actively intercepting and archiving vast troves of encrypted global network traffic. Attackers are fully aware they cannot decipher the data today, but are warehousing it until quantum processing matures.

Addressing the strategic dilemma this creates for enterprises, Cameron Camp, Senior Security Researcher at SecureIQLab, noted in a written response to BriefGlance that executives must adopt a highly structured triage approach. "CISOs need to balance budget against their existing inventory and understand which legacy systems are the least-equipped to handle ML-DSA integration under high load, and how critical those systems are," Camp wrote. "This is a good time to reflect on the coming threat before it impacts their organization."
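The two axes Camp describes, how poorly a system copes with ML-DSA under load and how critical it is, can be combined into a simple ranking. The sketch below is illustrative only: the `LegacySystem` fields, the 1-to-5 and 0-to-1 scales, and the multiplication used as a score are all assumptions, not a methodology from SecureIQLab or the source reports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LegacySystem:
    name: str
    criticality: int      # hypothetical scale: 1 (low) to 5 (business-critical)
    pqc_readiness: float  # hypothetical scale: 0.0 (fails under ML-DSA load) to 1.0 (validated)


def triage_score(system: LegacySystem) -> float:
    """Higher score = more critical and less ready, so review it sooner."""
    return system.criticality * (1.0 - system.pqc_readiness)


def triage_order(systems):
    """Return systems sorted from highest to lowest triage score."""
    return sorted(systems, key=triage_score, reverse=True)


# Example inventory with made-up names and values.
inventory = [
    LegacySystem("edge-tls-proxy", criticality=5, pqc_readiness=0.2),
    LegacySystem("core-firewall", criticality=5, pqc_readiness=0.9),
    LegacySystem("lab-gateway", criticality=2, pqc_readiness=0.0),
]
ranked_names = [s.name for s in triage_order(inventory)]
```

A real inventory would replace the single readiness number with measured behavior under load, and budget constraints would decide how far down the ranked list a refresh can reach.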

The Physics of Failure: Certificate Bloat and TCP Fragmentation

The most immediate and tangible crisis facing enterprise security architecture today is not the theoretical decryption of legacy algorithms, but the operational burden introduced by the post-quantum standards themselves. The implementation of NIST Federal Information Processing Standards (FIPS) 203, 204, and 205, which specify ML-KEM, ML-DSA, and SLH-DSA respectively, is straining network infrastructure that was built around the far smaller keys and signatures of classical cryptography.

The core crisis lies in the massive certificate bloat required to secure data against quantum algorithms. A post-quantum Transport Layer Security (TLS) handshake containing a full ML-DSA certificate chain exceeds 14.7 kilobytes, more than a sender can transmit in its first burst under TCP's default initial congestion window of 10 segments (roughly 14.6 KB at a typical 1,460-byte segment size). The remainder must wait for an acknowledgment, which adds a round trip to connection setup, and the handshake always arrives split across many TCP segments that inspection devices have to reassemble.
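The congestion-window comparison can be checked with a back-of-the-envelope calculation. The sketch below assumes a 1,460-byte maximum segment size, an initial window of 10 segments (the RFC 6928 default), a window that doubles after each acknowledged flight, and no packet loss. Under those assumptions the first flight carries 14,600 bytes. Treating 14.7 KB as 14,700 bytes is also an assumption.

```python
import math

MSS_BYTES = 1460             # assumed: 1,500-byte MTU minus 40 bytes of IP and TCP headers
INITIAL_CWND_SEGMENTS = 10   # assumed: RFC 6928 default initial window


def flights_needed(payload_bytes: int,
                   mss: int = MSS_BYTES,
                   initcwnd: int = INITIAL_CWND_SEGMENTS) -> int:
    """Count the flights slow start needs to deliver payload_bytes.

    Simplified model: the window doubles after every fully acknowledged
    flight, and nothing is lost or retransmitted.
    """
    segments_left = math.ceil(payload_bytes / mss)
    cwnd = initcwnd
    flights = 0
    while segments_left > 0:
        segments_left -= cwnd
        cwnd *= 2
        flights += 1
    return flights


classical_flights = flights_needed(4_000)      # assumed size of a classical handshake
post_quantum_flights = flights_needed(14_700)  # the 14.7 KB figure cited above
```

In this model the 14,700-byte handshake needs 11 segments, one more than the initial window allows, so it takes two flights where a handshake of a few kilobytes takes one. The cost is an extra round trip of latency rather than a failed connection.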

Legacy network inspection tools, deep packet inspection (DPI) engines, and firewalls are hardcoded with fixed buffer sizes designed exclusively for the small handshakes of classical cryptography. When these limited memory buffers are saturated by fragmented post-quantum certificates, the firewalls routinely register the massive fragments as anomalies or buffer-overflow attacks, resulting in silent packet drops and connection failures.

However, the friction does not manifest universally as a protocol breakdown. In a separate written response clarifying the network-level impact, Camp pushed back on the idea of a systemic crash. "ML-DSA increases certificate and handshake size significantly, but that's not primarily a 'TCP packet fragmentation' or a guaranteed 'latency crisis'," Camp explained. Instead, the failures stem directly from legacy systems - older firewalls, proxies, and TLS inspection devices - that "make assumptions about certificate-chain size, TLS record structure, buffer depth."
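The kind of built-in assumption Camp describes can be illustrated with a toy handshake reassembler. This is a sketch under stated assumptions, not the behavior of any specific product: the 8,192-byte cap, the class and function names, and the placeholder message are hypothetical, and the model skips TLS record parsing and works directly on handshake bytes. The only protocol detail it relies on is the TLS handshake header, which is a 1-byte type followed by a 3-byte big-endian length.

```python
class HandshakeTooLarge(Exception):
    """Raised when a declared handshake message length exceeds the buffer cap."""


class HandshakeReassembler:
    """Reassembles TLS handshake messages from a stream of byte fragments."""

    def __init__(self, max_message_bytes: int) -> None:
        self.max_message_bytes = max_message_bytes
        self._buf = bytearray()

    def feed(self, fragment: bytes) -> list:
        """Append a fragment and return any messages that are now complete."""
        self._buf += fragment
        complete = []
        while len(self._buf) >= 4:
            # Header: 1-byte message type, then 3-byte big-endian body length.
            body_len = int.from_bytes(self._buf[1:4], "big")
            if body_len > self.max_message_bytes:
                raise HandshakeTooLarge(
                    f"declared {body_len} bytes, cap is {self.max_message_bytes}"
                )
            total = 4 + body_len
            if len(self._buf) < total:
                break  # wait for more fragments
            complete.append(bytes(self._buf[:total]))
            del self._buf[:total]
        return complete


def fake_certificate_message(body_len: int) -> bytes:
    """Build a placeholder Certificate message (type 11) with a zero-filled body."""
    return bytes([11]) + body_len.to_bytes(3, "big") + bytes(body_len)


def replay(reassembler: HandshakeReassembler, message: bytes, chunk: int = 1460) -> list:
    """Feed a message to the reassembler in segment-sized chunks."""
    out = []
    for i in range(0, len(message), chunk):
        out.extend(reassembler.feed(message[i:i + chunk]))
    return out
```

Replaying a 12,000-byte placeholder certificate message through `HandshakeReassembler(8_192)` raises `HandshakeTooLarge` on the first chunk, while `HandshakeReassembler(65_536)` returns the message intact. The traffic is identical in both cases. Only the device's assumption about size differs, which is the distinction Camp draws.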

The Compounding Risk of Generative AI and MCP

Compounding the immense cryptographic burden placed on modern network infrastructure is the parallel integration of agentic AI systems. The deployment of complex data connection frameworks, most notably Anthropic's Model Context Protocol (MCP), bridges external AI agents directly to internal enterprise systems.

This drastically expands the attack surface, introducing severe supply chain and application vulnerabilities that are actively being exploited in the wild. For example, CVE-2025-34072 exposed a zero-click data exfiltration flaw in an MCP server integration for Slack: AI agents could generate messages containing attacker-crafted hyperlinks which Slack's link-unfurling mechanism would then automatically fetch, silently transmitting data to attacker-controlled URLs without any user interaction. In April 2026, OX Security disclosed systemic insecure default handling affecting Anthropic MCP, exposing over 7,000 publicly reachable servers, while Datadog uncovered over 12,000 API keys and passwords exposed through insecure MCP credential handling.
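One generic defense against the link-unfurling pattern is to filter agent-generated text before it reaches a channel that fetches URLs automatically. The sketch below is not the fix applied for the CVE and is not part of any MCP SDK. The allowlist, the function name, and the `[link removed]` placeholder are hypothetical, and the URL pattern is deliberately simple.

```python
import re
from urllib.parse import urlsplit

ALLOWED_HOSTS = frozenset({"docs.example.com"})  # hypothetical allowlist
URL_PATTERN = re.compile(r"https?://[^\s<>|]+")  # simple matcher, not a full URL grammar


def redact_untrusted_links(message: str, allowed_hosts=ALLOWED_HOSTS) -> str:
    """Replace any URL whose host is not allowlisted with a placeholder."""

    def _replace(match):
        url = match.group(0)
        try:
            host = urlsplit(url).hostname or ""
        except ValueError:
            host = ""  # malformed URL: treat as untrusted
        return url if host in allowed_hosts else "[link removed]"

    return URL_PATTERN.sub(_replace, message)


cleaned = redact_untrusted_links(
    "See https://docs.example.com/a and https://evil.test/?q=secret"
)
```

Because the attacker-controlled URL is removed before posting, there is nothing left for an unfurler to fetch. Where the messaging API allows it, disabling automatic unfurling for agent-authored messages is a complementary control.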

Consequently, a modern cloud-native firewall must now reassemble a 14.7 KB post-quantum handshake that arrives split across multiple TCP segments, track the connection state without overflowing its buffers, and simultaneously perform deep semantic context analysis on massive Large Language Model (LLM) prompts to stop prompt injection and MCP exploitation. Both enforcement actions must be executed at enterprise line rates without adding noticeable latency.

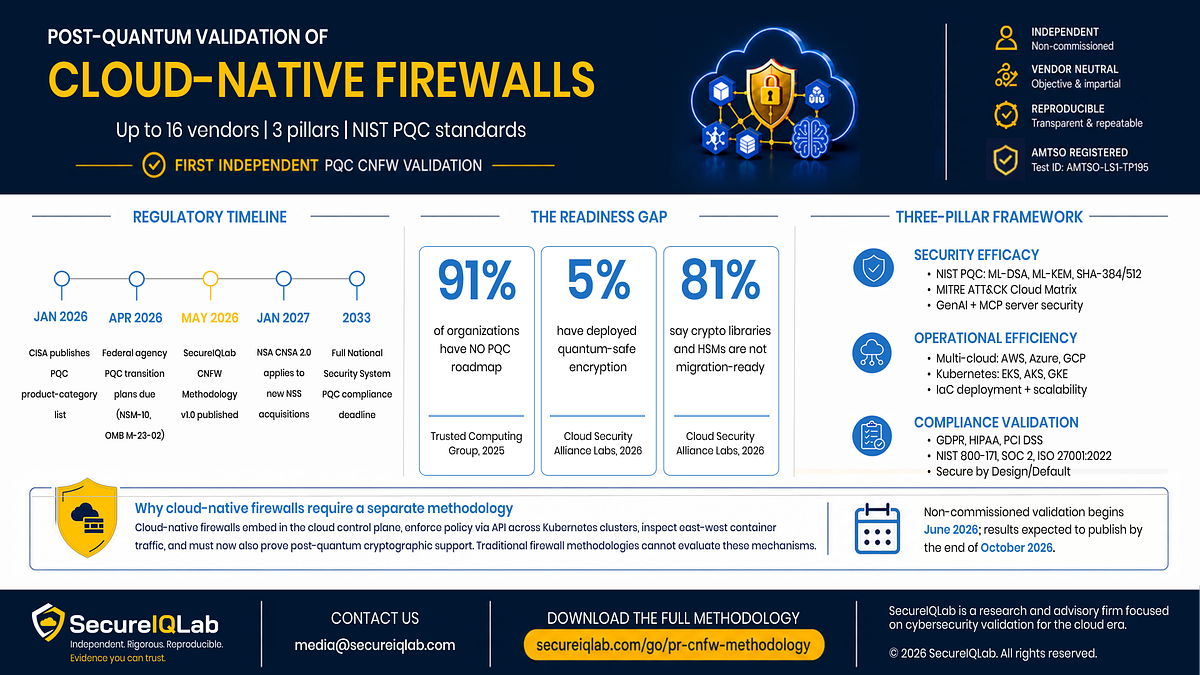

Market Shake-Up, CapEx, and Independent Validation

Global regulatory frameworks are rapidly accelerating this transition. In the United States, the Cybersecurity and Infrastructure Security Agency (CISA) published a January 2026 list of product categories requiring agencies to acquire PQC-enabled technology, while federal agencies faced a critical April 2026 deadline to submit comprehensive PQC transition plans. Internationally, the European Union is actively elevating PQC to a "state-of-the-art" compliance requirement under the Digital Operational Resilience Act (DORA) and the NIS2 Directive.

It is within this volatile landscape that independent, empirical testing has become critical. To benchmark the market, SecureIQLab has released a rigorous validation methodology (AMTSO Test ID AMTSO-LS1-TP195) designed to measure how cloud-native firewalls withstand the dual burdens of PQC standards and GenAI workload security. Testing for a selected cohort of 16 vendors will commence in June 2026, with final comparative reports scheduled for publication in October 2026.

The methodology sets a high bar for transparency. Unlike traditional commissioned tests, it limits vendor opt-outs strictly to products that are either not generally available or definitively outside the methodology's scope. Products that are tested but later withdrawn by the vendor will still be publicly labeled as "Tested, not published," preventing vendors from burying failing results.

While a sudden, universal out-of-band infrastructure refresh cycle is unlikely, Capital Expenditure (CapEx) trends will aggressively shift as results like these enter the public domain. Camp forecasts "targeted refresh pressure in high-throughput, TLS-inspecting environments: banks, SaaS providers, cloud gateways, critical infrastructure, and large east-west data centers."

The narrative surrounding post-quantum cryptography has matured entirely from theoretical physics into a multi-billion-dollar infrastructure imperative. As the market demands empirical data over vendor-driven hype, the hierarchy of global cybersecurity providers is poised for disruption. Reflecting on which firewall architectures are best positioned to benefit from the coming refresh cycle, Camp predicted: "The winners will be those that already handle large TLS handshakes, programmable TLS stacks, and fail-safe behavior."
