The AI Red Team Arrives: SpartanX Launches Autonomous Hacking Platform

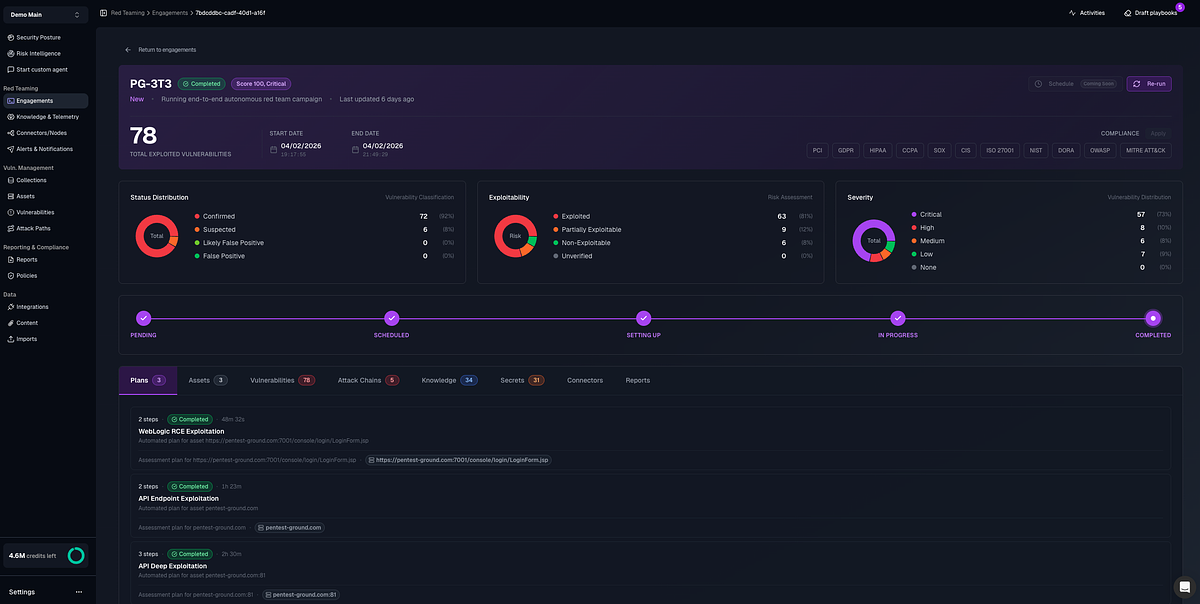

- 500 AI agents: SpartanX's platform deploys a coordinated swarm of over 500 AI agents to continuously find and validate security vulnerabilities.

- 95% noise reduction: The platform claims to eliminate up to 95% of the noise generated by existing vulnerability scanners by exploit-validating every finding.

- 150 integrations: The platform can integrate with over 150 other security tools to re-prioritize and validate findings.

Experts view SpartanX's autonomous red teaming platform as a significant advancement in offensive security, offering continuous, exploit-validated testing at scale, but caution that human oversight and explainable AI remain critical for trust and governance.

SpartanX Unleashes AI Red Team to Automate Offensive Security

BOSTON, MA – April 08, 2026 – In a move poised to reshape the cybersecurity landscape, AI-native security firm SpartanX today launched a fully autonomous red teaming platform, deploying a coordinated swarm of over 500 AI agents to continuously find and validate security vulnerabilities across a company's entire digital infrastructure.

The Boston-based company claims its platform marks the dawn of a new category in offensive security, moving beyond the limitations of both manual penetration testers and first-generation AI tools. For decades, organizations have relied on human experts to simulate attacks—a process widely seen as essential but also slow, expensive, and difficult to scale. SpartanX argues that its AI-driven system can operate with the depth of a human expert but at the speed and scale of a machine, continuously and without human bottlenecks.

"We didn't build a better scanner or a faster pen test. We built an entirely new category of offensive security platform," said Diego Spahn, Co-Founder and CEO of SpartanX, in a statement. "AI agents coordinate across every attack surface, validate every finding with real exploits, and operate continuously without human bottlenecks."

A New Paradigm for Penetration Testing

At the heart of the SpartanX platform is a sophisticated multi-agent system. More than 500 specialized AI agents work in concert to probe for weaknesses across six critical attack surfaces simultaneously: web applications, APIs, source code, networks, cloud infrastructure, and even other AI systems and Large Language Models (LLMs).

This approach stands in stark contrast to traditional methods. Manual red teams, while highly skilled, can only test a snapshot in time. Early AI-powered tools often acted as glorified scanners, relying on single, monolithic models prone to hallucination and unable to chain together exploits across different assets—a common tactic used by real-world adversaries. SpartanX asserts its platform was built to mimic the cross-domain, persistent nature of modern attackers.

While the company touts the platform as "fully autonomous" with "zero humans" needed for execution, industry context suggests a more nuanced reality. Experts in AI security often describe a spectrum of autonomy, and SpartanX's model appears to align with a "human-in-the-loop" approach for strategic control. This means while the AI agents handle the tactical execution of discovering, chaining, and validating exploits, human operators retain ultimate authority, setting the scope of tests, approving critical actions, and managing the overall security strategy. This balance seeks to leverage the speed of AI without abdicating critical human oversight and ethical control.

From Alert Fatigue to Actionable Fixes

One of the most persistent challenges for modern security operations centers (SOCs) is "alert fatigue." Traditional vulnerability scanners are notorious for generating a high volume of findings, many of which are false positives or low-priority risks. Security teams spend an inordinate amount of time sifting through this noise to find the threats that truly matter.

SpartanX aims to solve this problem by ensuring every finding is exploit-validated. The platform doesn't just report a potential vulnerability; it proves it's exploitable by executing a real, but safe, attack. The company claims this process can eliminate up to 95% of the noise generated by existing scanners. For organizations already invested in other tools, the platform can integrate with over 150 of them, ingesting their findings and using its AI to re-prioritize and validate what is actually a tangible risk.

Beyond just finding and validating, the platform extends into remediation. It is designed to automatically generate code-level fixes for identified vulnerabilities. According to the company, its AI agents analyze an organization's existing codebase to understand its patterns and standards, then deliver suggested patches as fully documented pull requests, ready for a developer's review and approval. This creates a seamless workflow from discovery to remediation, while also mapping findings to major compliance frameworks like SOC2, PCI-DSS, and HIPAA to streamline auditing and reporting.

Testing the Untestable: Securing the AI Frontier

The platform's most forward-looking capability may be its native focus on securing AI systems themselves. As businesses rush to integrate LLMs and other AI agents into their products and workflows, they are creating a new and poorly understood attack surface. SpartanX claims its platform is among the first to treat AI security as a first-class capability.

Its agents are specifically trained to test for emerging AI-specific vulnerabilities, including prompt injection, model jailbreaking, guardrail bypasses, agentic workflow manipulation, and sensitive data extraction from models. This specialization addresses a critical gap, as most existing security tools are not equipped to understand or test for these unique threats.

This capability places SpartanX in a burgeoning market alongside other innovators seeking to automate security validation, such as Horizon3.ai and Pentera. However, its aggressive focus on full-stack, exploit-validated autonomy combined with its native AI testing capabilities positions it as a distinct and ambitious player.

The Broader Implications of Autonomous Offense

The launch comes as CISOs and industry leaders grapple with a severe cybersecurity talent shortage and the increasing speed of automated attacks. Autonomous platforms are seen by many as a necessary evolution to augment overburdened human teams.

"We simply can't hire our way out of this problem," noted one chief information security officer at a financial services firm, speaking on the condition of anonymity. "The promise of a system that can continuously test our defenses, eliminate the noise, and free up my team to focus on strategic threats is incredibly compelling."

However, the rise of powerful, autonomous offensive tools is not without its challenges and concerns. Experts caution that while these systems excel at finding known vulnerabilities and executing predefined playbooks, they may still lack the human creativity and contextual awareness needed to uncover complex business logic flaws or novel attack paths. The question of trust, transparency, and governance looms large.

"For any autonomous system, especially one conducting offensive actions, you need absolute clarity on its methodology and robust controls for oversight," an industry analyst commented. "Vendors in this space have a responsibility to build explainable AI, not just a black box." The success of platforms like SpartanX will likely depend not only on their technical efficacy but also on their ability to build trust and demonstrate a safe, controllable, and collaborative relationship between human experts and their new AI counterparts.