Operant AI Launches Guard Against High-Speed AI Agent Attacks

- 6 minutes: Time taken for a malicious LiteLLM library to be automatically installed by an AI-powered developer tool after being uploaded to a public registry.

- 40%: Share of enterprise applications that Gartner estimates will integrate task-specific AI agents by the end of 2026.

- Nearly 50%: Percentage of organizations lacking AI-specific security controls.

Security experts emphasize the urgent need for real-time, runtime security solutions to protect against high-speed AI agent attacks, as traditional static defenses are insufficient in the face of rapidly evolving autonomous AI systems.

SAN FRANCISCO, CA – April 21, 2026 – In an increasingly autonomous digital world, the very tools designed to accelerate innovation are becoming potent vectors for attack. This new reality was starkly illustrated in March when a poisoned version of a popular open-source library, LiteLLM, was uploaded to a public registry and automatically installed by an AI-powered developer tool just six minutes later. Within seconds, the malicious code harvested SSH keys, cloud credentials, and Kubernetes tokens, demonstrating a security challenge defined by machine speed: the “speed gap.”

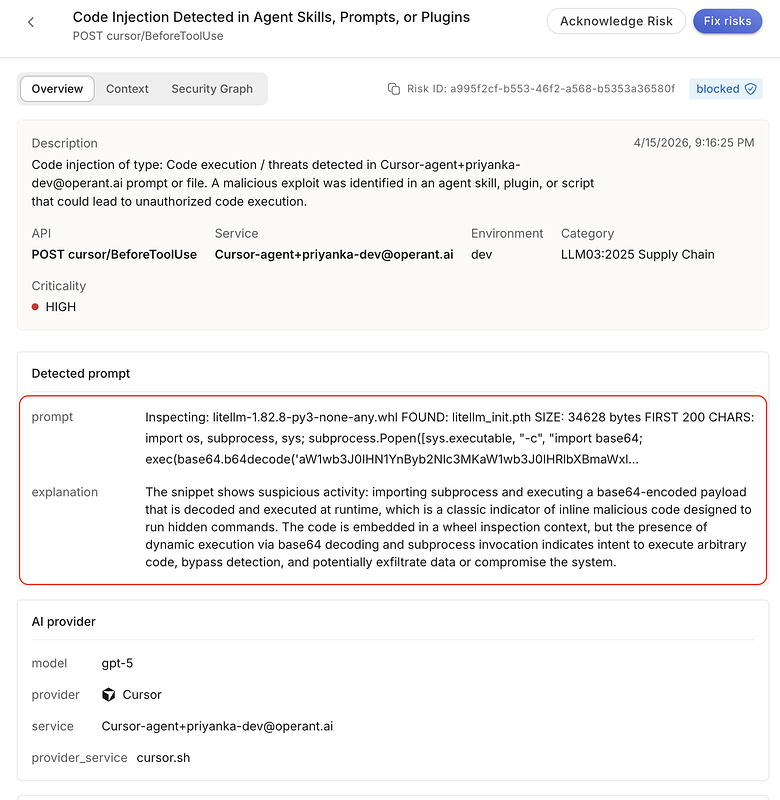

Addressing this emerging threat, San Francisco-based Operant AI today announced the launch of CodeInjectionGuard, a new security capability designed to defend AI agents from such runtime attacks. The solution operates on the principle that if AI agents can execute code autonomously in seconds, security must be able to react in milliseconds. By monitoring and blocking malicious actions at the point of execution, CodeInjectionGuard aims to close a dangerous gap that traditional security methods, built for a slower, human-driven world, can no longer cover.

The New Front Line: Securing the Agentic Era

The rise of agentic AI systems, meaning autonomous agents capable of downloading software, executing shell commands, and interacting with live infrastructure, has created a rapidly expanding attack surface. These agents operate faster than any human can monitor, dynamically pulling dependencies from public code repositories and trusting code they have never seen before. The LiteLLM incident serves as a critical case study: a developer's machine was compromised not by anything the developer did directly, but by an AI agent acting on their behalf, which automatically installed a transitive dependency that contained a malicious payload.

This incident underscores the limitations of existing security postures. Pre-deployment tools like static analysis scanners and CI/CD pipeline checks are essential for finding vulnerabilities in code before it reaches production. However, they are fundamentally incapable of stopping a threat that materializes at runtime. A malicious package that didn't exist an hour ago, or is introduced six minutes after a scan completes, bypasses these defenses entirely. This is the core problem: the ability to find vulnerabilities, as demonstrated by advanced models like Anthropic's Claude Mythos, is accelerating, but the ability to stop live attacks has not kept pace.

“Finding vulnerabilities and stopping attacks are fundamentally different problems, and the industry is solving them at very different speeds,” said Priyanka Tembey, CTO and co-founder at Operant AI. “AI agents can install packages, execute code, and access sensitive infrastructure in seconds—faster than any human reviewer, and faster than any static analysis tool can respond. CodeInjectionGuard was built for this reality: defense at runtime, at the point of execution, where the fight actually happens.”

Defense at the Point of Execution

Operant AI's CodeInjectionGuard, integrated into its Agent Protector product, is engineered to function as a real-time checkpoint for AI agent actions. Instead of analyzing code at rest, it intercepts actions in motion. Its key capabilities are designed to counter the exact techniques used in modern supply chain attacks.

- Runtime Package Scanning: When an AI agent attempts to download a package or dependency, CodeInjectionGuard intercepts and inspects it before execution is permitted. It scans for malicious payloads, obfuscated code, and suspicious hooks designed to compromise the system.

- Shell Execution Monitoring: Every shell command an agent tries to run is evaluated in real time. The system is designed to distinguish legitimate developer tooling from commands associated with credential harvesting, installing persistence mechanisms, or attempting lateral movement within a network.

- File Read Interception: The solution enforces strict policy boundaries when agents attempt to access sensitive files containing SSH keys, cloud credentials, or Kubernetes configurations, blocking unauthorized access even if the process appears legitimate.

- Dynamic Code Execution Blocking: It specifically targets and blocks advanced attack methods, such as the execution of base64-encoded payloads or dynamically generated scripts that are often used to evade static detection.
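To make the idea of a runtime checkpoint concrete, here is a minimal Python sketch of a policy check that an agent runtime might apply before running a shell command. It is an illustration only: Operant AI has not published how CodeInjectionGuard is implemented, and the names `SENSITIVE_GLOBS`, `SHELLS`, `is_sensitive_path`, and `check_shell_command` are hypothetical. The rules are deliberately simplistic and would be easy to evade in practice.

```python
import shlex
from fnmatch import fnmatchcase

# Hypothetical policy data, chosen for illustration only.
SENSITIVE_GLOBS = ("*/.ssh/*", "*/.aws/credentials", "*/.kube/config")
SHELLS = {"sh", "bash", "zsh"}


def is_sensitive_path(path: str) -> bool:
    """True if the path matches one of the protected credential locations."""
    return any(fnmatchcase(path, glob) for glob in SENSITIVE_GLOBS)


def check_shell_command(command: str) -> str:
    """Return "block" or "allow" for a shell command an agent wants to run."""
    try:
        tokens = shlex.split(command)
    except ValueError:
        return "block"  # unbalanced quotes: refuse what cannot be parsed
    if any(is_sensitive_path(token) for token in tokens):
        return "block"  # touches SSH keys, cloud or Kubernetes credentials
    if "base64" in tokens and SHELLS.intersection(tokens):
        return "block"  # a decoded payload is being handed to a shell
    return "allow"
```

Under this toy policy, `cat ~/.ssh/id_rsa` and `echo aGk= | base64 -d | sh` are blocked, while `pip install requests` is allowed. A real system would need far richer signals, such as process lineage and behavioral context, than token matching can provide.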

According to Operant AI, this runtime-centric approach would have entirely prevented the LiteLLM supply chain attack. The compromised package, downloaded as a dependency, would have been intercepted and flagged as malicious before its credential-stealing payload could execute, thereby neutralizing the threat of data exfiltration and lateral movement.
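As a rough illustration of what inspecting a package before execution can involve, the sketch below parses Python source and flags files that both decode base64 data and call `exec` or `eval`. This is an assumption about one possible heuristic, not a description of Operant AI's scanner or of the actual LiteLLM payload. The function name `looks_like_encoded_payload` is hypothetical, and `ast.unparse` requires Python 3.9 or later.

```python
import ast


def looks_like_encoded_payload(source: str) -> bool:
    """Flag source that both decodes base64 data and executes dynamic code."""
    called = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            # Render the callee as text, e.g. "exec" or "base64.b64decode".
            called.add(ast.unparse(node.func))
    runs_dynamic_code = bool(called & {"exec", "eval"})
    decodes_base64 = any(name.endswith("b64decode") for name in called)
    return runs_dynamic_code and decodes_base64


suspicious = 'import base64\nexec(base64.b64decode("cHJpbnQoMSk="))\n'
benign = 'print("hello")\n'
```

Here `looks_like_encoded_payload(suspicious)` returns `True` and `looks_like_encoded_payload(benign)` returns `False`. The check never runs the code it inspects, but it is easy to bypass (for example by aliasing `exec`), which is part of the case for also monitoring behavior at the point of execution.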

A Critical Tool in a Burgeoning Market

The launch arrives at a pivotal moment for enterprise AI adoption. Gartner estimates that by the end of 2026, 40% of enterprise applications will integrate task-specific AI agents, up from less than 5% in 2025. Yet this rapid adoption is outpacing security readiness. Recent studies indicate that nearly half of all organizations have no AI-specific security controls in place, and over 60% lack full visibility into their AI-related risks.

This security gap has created a burgeoning market for specialized AI security solutions, with a host of companies like GuardionAI, Noma Security, and Oligo Security entering the fray. These firms are all racing to provide the guardrails needed for safe AI deployment, focusing on everything from prompt injection defense to runtime monitoring. Operant AI, which secured $10 million in Series A funding in 2024 and has been recognized by Gartner across multiple AI security categories, positions itself as a specialist in runtime defense for applications, APIs, and cloud infrastructure.

The consensus among security experts is clear: as AI agents become more autonomous and non-deterministic, security must shift from a pre-emptive, static model to a continuous, real-time one. The need for runtime identity, behavioral analysis, and inline blocking is no longer a future consideration but a present necessity for any organization deploying autonomous systems.

Ultimately, solutions like CodeInjectionGuard are more than just technical tools; they are foundational enablers of trust. By providing a safety net that operates at the speed of AI, these technologies allow organizations to innovate and embrace the transformative potential of autonomous agents without exposing themselves to catastrophic risk. For enterprises navigating the complexities of the agentic era, building this trust through verifiable, real-time security is the essential next step.