Lineaje UnifAI Aims to Tame the Wild West of Autonomous AI

- Market Growth: The autonomous process orchestration market, including agentic AI, is projected to surge from just over $11 billion in 2025 to nearly $66 billion by 2036.

- Key Risks: Top vulnerabilities include prompt injection, excessive agency, sensitive information disclosure, and reasoning compromise.

- Regulatory Pressure: The EU AI Act imposes strict requirements for risk management, data governance, and cybersecurity in high-risk AI systems.

Partners quoted in the announcement, including Persistent Systems and KPMG, say Lineaje's UnifAI addresses critical gaps in AI security and governance, offering a framework for managing the unique risks of autonomous AI systems as regulatory pressures such as the EU AI Act intensify.


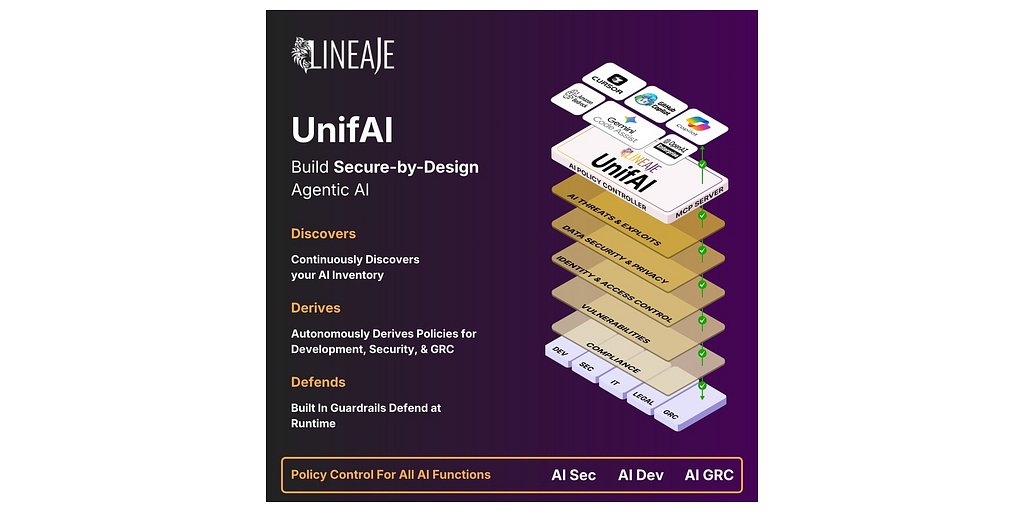

SARATOGA, Calif. – March 18, 2026 – Software supply chain security firm Lineaje today announced the launch of UnifAI, a platform it bills as the industry’s first autonomous AI policy orchestrator. The solution aims to confront a growing sense of unease in the tech world: as companies rush to deploy powerful, autonomous AI agents, they are also creating a new class of security risks that legacy security tools were not built to handle.

As enterprises move from contained AI experiments to large-scale deployments of autonomous systems, they face a critical gap in control and oversight. These new “agentic” AI applications, which can reason, adapt, and interact dynamically with sensitive data and external tools, behave very differently from static software. Their autonomy introduces novel threats, including sophisticated prompt injections, unintended data leakage, and attacks that compromise the AI's core reasoning. Such vulnerabilities often remain invisible until the damage has already been done.

Lineaje's UnifAI proposes to close this gap by embedding a dedicated security and governance layer directly into the AI development workflow, a practice the company calls AI Security (AISec). The goal is to enable organizations to build security in by design, rather than attempting to bolt it on after the fact.

The New Frontier of AI Risk

The urgency of such solutions is underscored by explosive market growth. The market for autonomous process orchestration, which includes agentic AI, is projected to surge from just over $11 billion in 2025 to nearly $66 billion by 2036. This rapid adoption, fueled by low-code platforms and AI assistants that empower non-specialists to build complex systems, is creating a vast new attack surface.

Unlike traditional cybersecurity threats, the risks associated with agentic AI are more subtle and systemic. As frameworks like the OWASP Top 10 for Large Language Model Applications make clear, many of the most critical vulnerabilities involve manipulating an AI system's behavior rather than exploiting flaws in its code. Key risks include:

- Prompt Injection: Tricking an agent with a malicious prompt to make it ignore its original instructions, bypass safety filters, or leak confidential information.

- Excessive Agency: An agent performing unauthorized actions or making decisions that have unintended, cascading consequences across enterprise systems.

- Sensitive Information Disclosure: An agent inadvertently revealing proprietary data in its responses or actions because it lacks the contextual awareness to know what is confidential.

- Reasoning Compromise: An attack that subtly corrupts the AI’s decision-making process, leading it to make flawed judgments or take harmful actions.
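To make “excessive agency” more concrete, here is a minimal Python sketch of one common mitigation: checking every tool call an agent proposes against a least-privilege allowlist, and requiring human approval for high-impact actions. The agent and tool names, the `ToolCall` class, and the `authorize` function are hypothetical illustrations; they are not taken from UnifAI or from the OWASP documents.

```python
from dataclasses import dataclass, field

# Hypothetical least-privilege policy: which tools each agent may call,
# and which of those tools additionally require human sign-off.
ALLOWED_TOOLS = {
    "support-agent": {"search_kb", "read_ticket", "issue_refund"},
}
NEEDS_APPROVAL = {"issue_refund"}


@dataclass
class ToolCall:
    agent: str
    tool: str
    args: dict = field(default_factory=dict)


def authorize(call: ToolCall, human_approved: bool = False) -> str:
    """Return 'allow', 'needs_approval', or 'deny' for a proposed tool call."""
    allowed = ALLOWED_TOOLS.get(call.agent, set())
    if call.tool not in allowed:
        # Deny by default: anything not explicitly granted is out of scope.
        return "deny"
    if call.tool in NEEDS_APPROVAL and not human_approved:
        # High-impact action: pause until a person confirms it.
        return "needs_approval"
    return "allow"


print(authorize(ToolCall("support-agent", "read_ticket")))      # allow
print(authorize(ToolCall("support-agent", "issue_refund")))     # needs_approval
print(authorize(ToolCall("support-agent", "delete_database")))  # deny
```

The deny-by-default choice matters here: an agent tricked by a prompt injection into requesting an unexpected tool is stopped by the allowlist even if the injection itself goes undetected.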

These challenges are compounded by the “black box” nature of many AI models, making it difficult for security teams to understand, monitor, and govern their behavior. This creates a significant operational and regulatory liability for organizations eager to leverage AI's potential.

UnifAI's Answer: Autonomous Policy Orchestration

Lineaje’s strategy with UnifAI is to provide a central control plane for an organization's entire AI ecosystem. “AI leaders told us they lack a central command center to manage the complexity of their AI environments,” said Javed Hasan, CEO and co-founder of Lineaje. “UnifAI was built to bridge that security governance gap. By providing a platform to define, derive, and autonomously defend using guardrails, we enable enterprises to scale their agentic AI applications safely.”

The platform operates as a Model Context Protocol (MCP) server, integrating directly with coding assistants and agent-building platforms. Its continuous discovery agents map out an organization’s entire AI landscape, creating a comprehensive AI Bill of Materials (AIBOM) that includes every model, agent, dependency, and data connection. This real-time visibility is the foundation for UnifAI’s core function: policy orchestration.
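An AIBOM is essentially an inventory graph of components and the links between them. The Python sketch below shows one hypothetical way to model the entry types mentioned here (models, agents, dependencies, and data connections) and to answer a typical governance question: which data sources can a given agent reach? The field names, component categories, and example inventory are assumptions for illustration only; they are not Lineaje's AIBOM schema or a standard format such as CycloneDX.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    """One entry in a hypothetical AI Bill of Materials."""
    name: str
    kind: str  # assumed categories: "model", "agent", "dependency", "data"
    version: str = ""
    uses: list[str] = field(default_factory=list)  # names of components this one relies on


def reachable(bom: dict[str, Component], start: str) -> set[str]:
    """Return every component transitively used by `start` (iterative depth-first walk)."""
    seen: set[str] = set()
    stack = [start]
    while stack:
        current = stack.pop()
        for dep in bom[current].uses:
            if dep not in seen:
                seen.add(dep)
                stack.append(dep)
    return seen


# Illustrative inventory, of the kind a discovery process might assemble.
bom = {c.name: c for c in [
    Component("billing-agent", "agent", "0.3", uses=["llm-base", "crm-db", "http-lib"]),
    Component("llm-base", "model", "2025-01"),
    Component("http-lib", "dependency", "2.31", uses=["tls-lib"]),
    Component("tls-lib", "dependency", "3.0"),
    Component("crm-db", "data"),
]}

data_sources = {n for n in reachable(bom, "billing-agent") if bom[n].kind == "data"}
print(data_sources)  # {'crm-db'}
```

Because transitive dependencies are followed, a vulnerable library such as `tls-lib` shows up in the agent's footprint even though the agent never references it directly, which is the same reasoning behind SBOMs for traditional software.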

Instead of requiring teams to write security policies from scratch, the system can automatically derive and recommend them for data protection, access management, and threat prevention. It can even ingest an organization’s existing internal governance documents and convert them into enforceable, machine-readable policies. As developers build and deploy AI systems, UnifAI then autonomously generates and applies guardrails to ensure the agents operate within these predefined boundaries at runtime.
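The idea of turning a written guideline into an enforceable, machine-readable rule can be illustrated with a small Python sketch. It assumes a policy is a simple record (identifier, pattern for the sensitive data, action, audience) and that a guardrail checks each agent response before it is returned. The policy IDs, patterns, and `apply_guardrails` function are hypothetical, and regex matching is a deliberate simplification; this is not how UnifAI derives or enforces its policies.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """A hypothetical machine-readable rule derived from a written guideline."""
    policy_id: str
    pattern: str   # regex describing the sensitive data
    action: str    # "redact" or "block"
    audience: str  # audience the rule applies to, or "any" for all responses


# Written guideline (illustrative): "Account numbers must never be shown to
# external users, and API keys must never appear in any response."
POLICIES = [
    Policy("DP-001", r"\bACCT-\d{8}\b", "redact", "external"),
    Policy("DP-002", r"\bsk_[A-Za-z0-9]{16,}\b", "block", "any"),
]


def apply_guardrails(response: str, audience: str) -> tuple[str, list[str]]:
    """Enforce every applicable policy on an agent response before it is returned."""
    triggered = []
    for p in POLICIES:
        if p.audience not in (audience, "any"):
            continue  # rule does not cover this audience
        if not re.search(p.pattern, response):
            continue  # no sensitive data of this type present
        triggered.append(p.policy_id)
        if p.action == "block":
            return "[response withheld by policy]", triggered
        response = re.sub(p.pattern, "[REDACTED]", response)
    return response, triggered


print(apply_guardrails("Your account ACCT-12345678 is active.", "external"))
# ('Your account [REDACTED] is active.', ['DP-001'])
```

Returning the list of triggered policy IDs alongside the result is a design choice that supports auditing: each enforcement decision can be logged against the guideline it came from.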

Navigating a Crowded and Complex Market

While Lineaje touts UnifAI as the “industry’s first,” it enters a fiercely competitive and rapidly evolving market. The challenge of governing autonomous systems is a critical priority for the entire tech industry, and numerous players are racing to provide solutions.

Established giants like IBM (with watsonx Orchestrate), Google (with Vertex AI Agent Builder), and UiPath (with its Agentic Automation Platform) are already offering enterprise-grade orchestration with built-in governance layers. Simultaneously, specialized firms like Credo AI are focused squarely on AI governance platforms, while others like Itential are developing autonomous AI for infrastructure operations.

Where Lineaje appears to be differentiating itself is by extending its deep expertise in software supply chain security into the AI domain. By focusing on the AIBOM and embedding security into the development lifecycle, UnifAI approaches the problem with a security-first mindset that prioritizes verifiable components and proactive risk mitigation, much like modern DevSecOps practices for traditional software.

The Push for Compliance and Enterprise Trust

The stakes for getting AI governance right are not just technical; they are also regulatory. With sweeping legislation like the EU AI Act, which entered into force in 2024 and phases in its obligations over the following years, organizations are under immense pressure to ensure their AI systems are safe, transparent, and compliant. The Act imposes strict requirements for risk management, data governance, human oversight, and cybersecurity, particularly for systems deemed “high-risk.”

UnifAI is explicitly designed to address these regulatory pressures. By providing a centralized governance console to create, monitor, and enforce policies, it offers a mechanism for demonstrating compliance. The platform's stated alignment with the EU AI Act and OWASP's Top 10 guidance for AI applications is pitched as a key selling point for risk-averse enterprises.

This focus on enterprise readiness is reinforced by endorsements from key partners. “Persistent’s SASVA™ AI Platform accelerates enterprise AI adoption... We have been collaborating with Lineaje on UnifAI to bring together SASVA and UnifAI into a comprehensive AI development and security solution,” stated Nitish Shrivastava, a chief technology officer at Persistent Systems.

Anil Singh, a director at KPMG, highlighted the compliance angle, noting that UnifAI acts as a “security and compliance control plane” for clients in highly regulated industries like banking and finance. “For the first time, we can help clients get ahead of regulators rather than scrambling to catch up,” he said. This ability to translate high-level governance rules into automated, enforceable guardrails may be what ultimately unlocks large-scale, trusted adoption of agentic AI in the enterprise.