Keycard Aims to Tame Autonomous AI Coding Agents with New Platform

- 1 in 5 organizations has suffered a serious incident linked to AI-generated code.

- $1 billion projected market size for AI security and governance platforms by 2030.

- Days to deploy agents in production with Keycard's platform, per early adopters.

Experts agree that while autonomous AI coding agents offer significant productivity gains, they introduce critical security risks that require specialized runtime governance solutions to balance autonomy with safety.

SAN FRANCISCO, CA – March 19, 2026 – As artificial intelligence rapidly evolves from simple autocomplete tools to truly autonomous coding agents, enterprises are grappling with a critical dilemma: how to harness the immense productivity of AI without exposing themselves to catastrophic security risks. Addressing this challenge head-on, AI identity firm Keycard today announced the launch of Keycard for Coding Agents, a platform designed to provide fine-grained runtime governance for these powerful new digital workers.

The new solution aims to bridge the widening gap between the rapid adoption of AI development tools and the lagging security frameworks needed to control them. By integrating with major coding agents from providers like OpenAI, Anthropic, and popular open-source projects, Keycard is offering enterprises a centralized control plane to manage what AI agents can do, moment by moment, without stifling the autonomy that makes them so valuable.

The Governance Gap in an AI-Driven World

The era of AI-powered software development is no longer on the horizon; it has arrived. However, its rapid proliferation has created a significant blind spot for security teams. The unchecked use of unapproved AI tools, a phenomenon known as “Shadow AI,” has become a major concern, with recent studies showing that one in five organizations has already suffered a serious incident linked to AI-generated code. This problem is compounded by the fact that traditional security systems are ill-equipped to handle the unique nature of AI agents.

“Coding agents have crossed from autocomplete into true autonomy, but most organizations aren’t ready for the implications,” warned Paul Nashawaty, Principal Analyst at theCUBE and ECI Research. He noted that enterprises are adopting multiple agents, each with its own configuration, creating “operational complexity at scale.” Security teams are left struggling with a new class of software actor that can “act without human oversight, access sensitive data, invoke tools unpredictably and introduce subtle but significant risks.”

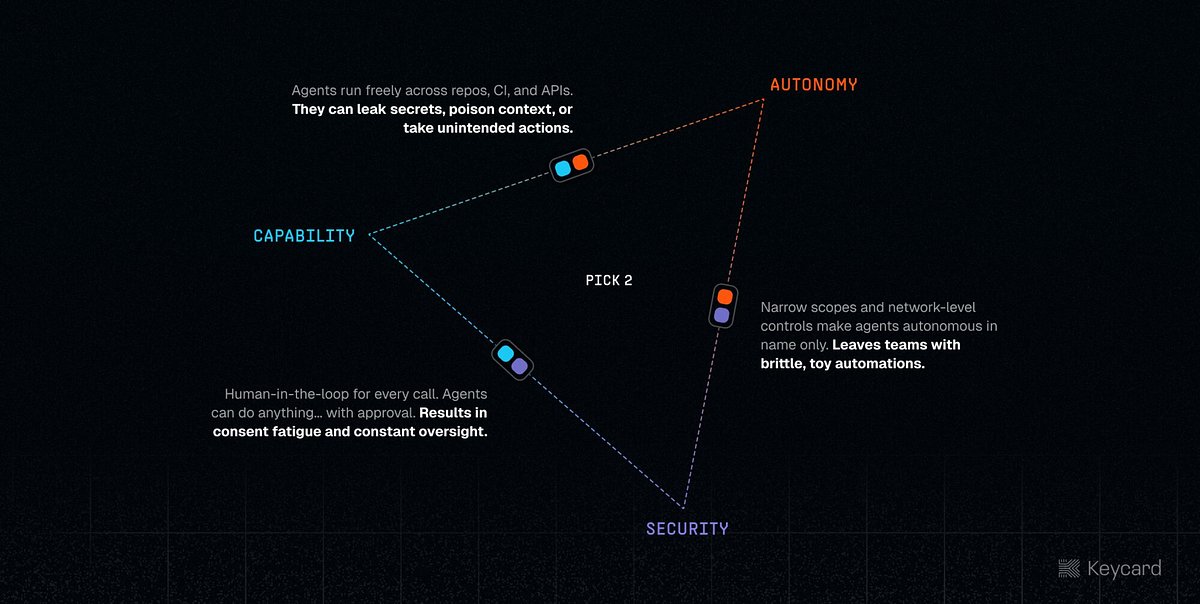

This creates a precarious trade-off between autonomy, capability, and security. To mitigate risk, many organizations default to restrictive, human-in-the-loop workflows in which developers must manually approve every action an agent takes. The result is “consent fatigue,” which ultimately negates the productivity gains the agents were meant to provide.

“As industries continue to explore the potential of agentic AI workforces, especially agentic software development and coding, we must proceed with caution,” said Ken Buckler, Research Director at EMA. “Without proper guardrails, agentic AI hallucinations can not only introduce new attack vectors into an enterprise but also hold significant potential to negatively affect productivity and business operations.”

A New Class of Guardrails: Runtime Governance

Keycard’s approach moves beyond simple prompt filtering or post-mortem analysis. The platform is built on the principle of runtime governance, which involves intercepting and evaluating every action an agent attempts to take—from a shell command to an API call—at the moment of execution.

“The agents writing code today are the ones rebuilding our applications and workflows to be agent native. Govern the coding workflow and you govern the factory floor,” said Ian Livingstone, co-founder and CEO of Keycard. “That's what Keycard for Coding Agents does: runtime controls on every tool call, every credential, every action, so developers can experience autonomy instead of approving every tool call.”

At the core of the platform are several key capabilities designed to address the specific vulnerabilities introduced by autonomous agents:

Identity-Bound, Task-Scoped Credentials: Static, long-lived secrets are a primary target for attackers. Keycard replaces them with short-lived credentials that are cryptographically bound to the specific agent, user, and task. These credentials are injected directly into memory and expire when the session ends, drastically reducing the attack surface.

Policy Enforcement on Every Tool: The platform allows security and platform teams to define explicit policies about which tools an agent can use and under what circumstances. This governance is applied at the point of execution, ensuring that an agent cannot perform an unauthorized action, even if its own logic directs it to.

Full Visibility and Auditability: Every prompt, tool invocation, and policy decision is logged in near-real-time and attributed to a specific agent and developer. This creates a comprehensive audit trail that can be streamed to a company’s security information and event management (SIEM) system, providing a unified view of all agent activity.
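To make the three capabilities above concrete, here is a minimal Python sketch of how a runtime gate of this kind could work in principle. It is not Keycard's implementation or API: `SIGNING_KEY`, `Credential`, `issue_credential`, and `PolicyGate` are hypothetical names, and an HMAC stands in for whatever cryptographic binding the product actually uses. The sketch issues a short-lived credential bound to one agent, user, and task, checks every tool call against an allow-list, and records each decision as a JSON audit line.

```python
import hashlib
import hmac
import json
import secrets
import time
from dataclasses import dataclass, field

# Hypothetical issuer key; a real system would hold this in a managed key store.
SIGNING_KEY = secrets.token_bytes(32)


def _sign(agent: str, user: str, task: str, expires_at: float) -> str:
    """Bind agent, user, task, and expiry together with an HMAC-SHA256 tag."""
    message = json.dumps([agent, user, task, expires_at]).encode()
    return hmac.new(SIGNING_KEY, message, hashlib.sha256).hexdigest()


@dataclass(frozen=True)
class Credential:
    """Hypothetical short-lived credential: an expiry plus a binding signature."""
    expires_at: float
    signature: str


def issue_credential(agent, user, task, ttl_seconds=300.0, now=None) -> Credential:
    """Issue a credential valid only for this agent, user, and task until expiry."""
    now = time.time() if now is None else now
    expires_at = now + ttl_seconds
    return Credential(expires_at, _sign(agent, user, task, expires_at))


@dataclass
class PolicyGate:
    """Checks each tool call at execution time and logs the decision."""
    allowed_tools: dict  # agent name -> set of permitted tool names
    audit_log: list = field(default_factory=list)

    def authorize(self, cred, agent, user, task, tool, now=None) -> bool:
        now = time.time() if now is None else now
        expected = _sign(agent, user, task, cred.expires_at)
        if not hmac.compare_digest(cred.signature, expected):
            allowed, reason = False, "credential not bound to this agent/user/task"
        elif now >= cred.expires_at:
            allowed, reason = False, "credential expired"
        elif tool not in self.allowed_tools.get(agent, set()):
            allowed, reason = False, "tool not permitted by policy"
        else:
            allowed, reason = True, "policy match"
        # One JSON line per decision, suitable for streaming to a log collector.
        self.audit_log.append(json.dumps({
            "time": now, "agent": agent, "user": user, "task": task,
            "tool": tool, "allowed": allowed, "reason": reason,
        }))
        return allowed
```

In this sketch, a credential issued for one task fails verification if it is presented for a different task, and any call made after `expires_at` is denied regardless of policy. Every decision, allowed or denied, lands in `audit_log`.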

Balancing Autonomy and Security

The ultimate goal of Keycard for Coding Agents is to resolve the false choice between speed and safety. By implementing intelligent guardrails, the platform allows routine, low-risk actions to proceed autonomously, freeing developers from constant interruptions. However, when an agent attempts a sensitive operation, such as deploying code to a production environment or accessing a restricted database, the system can automatically trigger a step-up approval from the developer.
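The step-up pattern described here can be illustrated with a short Python sketch. Everything in it is an assumption made for illustration: the `Action` type, the `SENSITIVE_TARGETS` set, and the `approver` callback are hypothetical and do not reflect Keycard's actual policy language. Low-risk actions run without interruption, while actions against sensitive targets run only if a human approves them.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Decision(Enum):
    ALLOW = "allow"      # proceed autonomously
    STEP_UP = "step_up"  # pause and ask the developer


@dataclass(frozen=True)
class Action:
    tool: str    # e.g. "pytest", "deploy", "sql"
    target: str  # e.g. "local", "production", "restricted-db"


# Hypothetical list of targets that always require a human decision.
SENSITIVE_TARGETS = {"production", "restricted-db"}


def evaluate(action: Action) -> Decision:
    """Classify an action as routine or as requiring step-up approval."""
    if action.target in SENSITIVE_TARGETS:
        return Decision.STEP_UP
    return Decision.ALLOW


def run(action: Action, approver: Callable[[Action], bool]) -> str:
    """Execute routine actions directly; consult the approver only on step-up."""
    if evaluate(action) is Decision.STEP_UP and not approver(action):
        return "blocked"
    return "executed"
```

The design point is that `approver` is never called for routine actions, so the developer is interrupted only for the sensitive subset rather than for every tool call.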

This selective use of human oversight brings developers into the loop only when their judgment is truly required, preserving both security and productivity. The platform's ability to create portable agent configurations further improves the developer experience: a policy can be defined once and applied consistently whether the agent is running on a developer's laptop, in a sandboxed environment, or within a CI/CD pipeline.

Early adopters are already seeing the benefits of this balanced approach. “We wanted our engineers deploying agents and tools into production without needing to be security or identity experts. Keycard's platform made that possible,” stated Dennis Yang, Principal Product Manager for Generative AI at Chime. “We had agents running against production systems in days.”

Navigating the Emerging AI Security Market

Keycard is entering a nascent but rapidly growing market for AI security and governance. As enterprises pour investment into AI, spending on the platforms to secure and govern these systems is projected to surpass $1 billion by 2030. While some vendors offer broad AI security monitoring and others focus on scanning models for vulnerabilities, Keycard has carved out a specific and critical niche focused on the runtime behavior of coding agents.

This sharp focus, combined with deep integrations into the tools developers are already using, positions the company to address a key pain point in the enterprise. The backing of prominent venture capital firms, including Andreessen Horowitz, boldstart ventures, and Acrew Capital, signals strong market confidence in this specialized approach.

The launch comes as the industry converges on the need for new identity and access paradigms, with standards like SPIFFE (Secure Production Identity Framework For Everyone) being adapted for the unique challenges of non-human, non-deterministic actors. By building a platform that provides identity, policy, and visibility for agents, Keycard is laying down a foundational piece of infrastructure for the next generation of software development. As the company showcases its new platform at the upcoming RSA Conference, it will be speaking to an audience of security leaders who are acutely aware that the factory floor of the future is being built with code, and increasingly, that code is being written by AI.