Comcast and Personal AI Ignite the Edge with Memory-Based AI Models

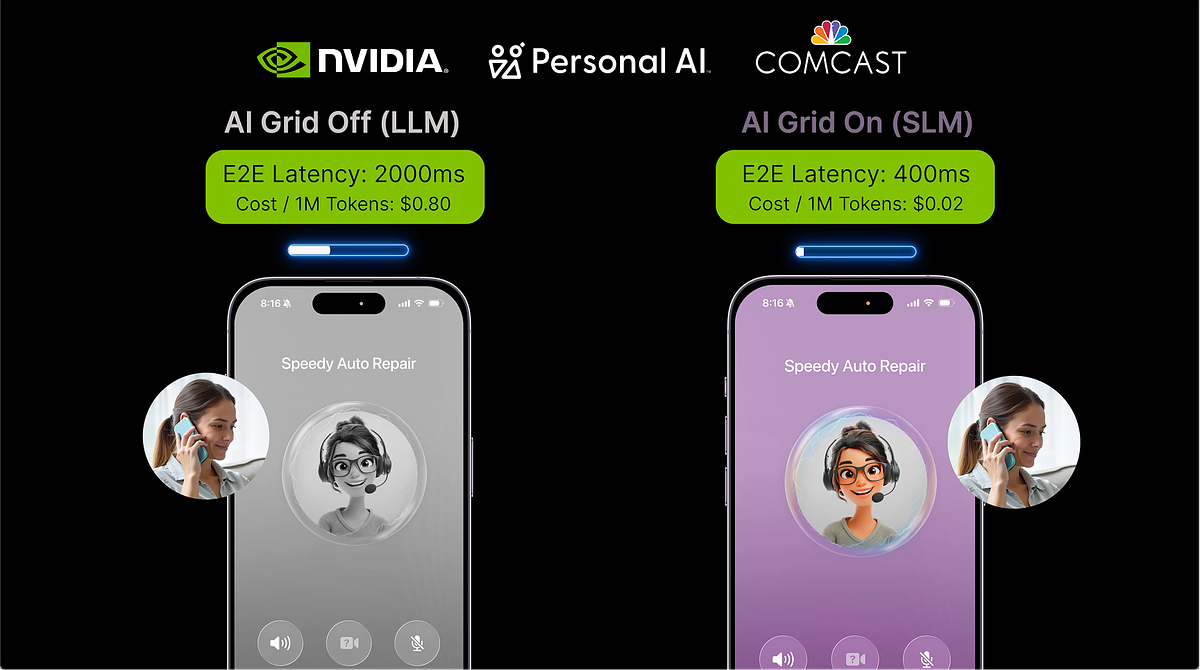

- 65 million homes and businesses: Comcast’s network reach, providing a foundation for a distributed AI platform.
- Under 500 milliseconds: Response times achieved by memory-based SLMs in trials.
- Up to 40 times lower operating costs: Compared to traditional cloud deployments.

Experts view this collaboration as a pivotal shift toward distributed AI, enabling faster, more efficient, and privacy-focused services by leveraging edge computing and memory-based models.

SAN JOSE, CA – March 17, 2026 – A landmark collaboration announced today at NVIDIA GTC is set to redefine the landscape of artificial intelligence, moving it from distant cloud data centers to the edge of the network, right into our neighborhoods. Comcast, the telecommunications giant, is partnering with specialist firm Personal AI to deploy a new class of memory-based Small Language Models (SLMs) across its vast network, all accelerated by next-generation NVIDIA technology. This strategic initiative, dubbed the AI Grid, promises to unlock a new era of real-time, hyper-personalized digital experiences with dramatically lower latency and cost.

Trials conducted at Comcast’s Philadelphia headquarters have already validated the approach, demonstrating the technical and economic viability of distributing AI workloads. The partnership aims to solve some of the most persistent challenges facing large-scale AI deployment, paving the way for services that are not just intelligent, but intimately aware of user context and history.

Beyond the Cloud: The Dawn of the AI Grid

For years, the dominant model for AI has been the centralized cloud. Powerful, large language models (LLMs) residing in massive data centers have fueled the current AI boom, but this architecture has inherent limitations. The physical distance between the user and the data center introduces latency, creating delays that are unacceptable for real-time applications like responsive voice assistants or interactive gaming. Furthermore, the immense computational power required to run these LLMs, coupled with the cost of transferring vast amounts of data, makes scaling personalized services prohibitively expensive. Privacy also remains a significant concern, as sensitive user data must often travel to the cloud for processing.
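The distance argument above can be made concrete with a rough calculation. The following is a minimal sketch, assuming light travels through optical fiber at about two-thirds of its speed in a vacuum; the two distances are hypothetical examples, not figures from Comcast or the trials. It covers propagation delay only, so routing, queuing, and model inference time would all add to these numbers in practice.

```python
# Illustrative only: the distances below are hypothetical, not Comcast figures.
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_VELOCITY_FACTOR = 2 / 3  # light in glass fiber travels at roughly two-thirds of c


def round_trip_propagation_ms(distance_km: float) -> float:
    """Best-case round-trip propagation delay over fiber, in milliseconds."""
    speed_km_per_s = SPEED_OF_LIGHT_KM_PER_S * FIBER_VELOCITY_FACTOR
    return 2 * distance_km / speed_km_per_s * 1000


if __name__ == "__main__":
    scenarios = [
        ("hypothetical edge site", 50),
        ("hypothetical distant data center", 2000),
    ]
    for label, km in scenarios:
        print(f"{label}: {round_trip_propagation_ms(km):.2f} ms round trip")
```

Under these assumptions the nearby site costs about half a millisecond per round trip and the distant one about 20 milliseconds, before any processing takes place.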

The AI Grid framework, championed by Comcast and its partners, offers a revolutionary alternative. By distributing AI inference workloads across existing network infrastructure—from regional hubs to local edge sites—computing power is brought physically closer to the end-user. Comcast’s network, which reaches more than 65 million homes and businesses, provides a ready-made foundation for one of the largest distributed AI platforms in the United States.

“With the AI ecosystem rapidly evolving, there is a growing role for distributed compute to play in running real-time Edge AI applications that unlock faster, smarter and more responsive experiences for consumers and businesses,” said Elad Nafshi, Chief Network Officer at Comcast. This shift from a centralized to a distributed model is the key to overcoming the structural bottlenecks of the cloud, enabling a new wave of AI-native services that are both powerful and efficient.

The Power of Memory in AI

At the heart of this technological shift are the specialized models developed by Personal AI. Unlike the general-purpose LLMs that power most mainstream chatbots, Personal AI focuses on Small Language Models that incorporate a unique 'identity-based memory architecture.' This technology fundamentally changes how AI interacts with users, moving beyond the limitations of a short-term context window to build persistent, long-term memory.

“AI without memory will not win in this market,” stated Suman Kanuganti, CEO and Co-Founder of Personal AI. “Memory is what turns AI into personal intelligence.” His company’s technology is designed to encode user interactions and experiences, stabilize them into long-term memory traces, and integrate them directly into the AI’s neural architecture. The result is an AI that remembers context, learns from past conversations, and evolves over time—creating what Kanuganti calls an “AI of Things.” This approach dramatically reduces the risk of AI “hallucinations” by grounding the model in user-specific data, ensuring responses are not just creative but also accurate and relevant.

These memory-based SLMs are significantly smaller and more efficient than their cloud-based counterparts. Their compact size allows them to run on less powerful hardware, making them ideal for deployment at the network edge. In the Comcast trials, these models delivered response times under 500 milliseconds and demonstrated the potential for up to 40 times lower operating costs compared to traditional cloud deployments. This efficiency is achieved by eliminating the need to constantly re-feed the model with historical context for every single interaction.
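The effect of not re-feeding history can be illustrated with simple token accounting. This is a simplified sketch, not Personal AI's method: it assumes a stateless model receives the entire prior conversation with every request, while a memory-based model receives only the new turn. The function names, the fixed tokens-per-turn figure, and the turn count are all hypothetical, and the sketch does not attempt to reproduce the 40 times cost figure from the trials.

```python
def tokens_with_refeed(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens when every request resends the full history so far."""
    # Request k carries k turns' worth of tokens: the new turn plus k - 1 earlier ones.
    return sum(k * tokens_per_turn for k in range(1, turns + 1))


def tokens_with_memory(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens when each request carries only the new turn."""
    return turns * tokens_per_turn


if __name__ == "__main__":
    turns, per_turn = 10, 100  # hypothetical conversation size
    refeed = tokens_with_refeed(turns, per_turn)
    memory = tokens_with_memory(turns, per_turn)
    print(f"re-feeding history: {refeed} input tokens")
    print(f"persistent memory:  {memory} input tokens")
    print(f"ratio: {refeed / memory:.1f}x")
```

In this toy model the re-feeding cost grows quadratically with the number of turns while the memory-based cost grows linearly, so the gap widens as conversations get longer.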

A New Playbook for Telecom Giants

The collaboration provides a strategic blueprint for how telecommunication companies can monetize their extensive network infrastructure in the age of AI. Instead of merely acting as a conduit for data flowing to and from cloud data centers, telcos like Comcast can transform their networks into active, intelligent platforms for delivering high-value services. The AI Grid represents a significant new revenue opportunity, enabling the creation of premium offerings that rely on low-latency, high-concurrency processing.

Initial use cases being explored are both practical and ambitious. They include an advanced, real-time advertising engine that can deliver personalized video ads, and an AI-powered concierge for small businesses, helping them manage customer inquiries and appointments with a level of sophistication previously reserved for large enterprises. For consumers, the technology could take the form of premium, low-latency gaming tiers that offer greater responsiveness by placing NVIDIA GPU resources just milliseconds away from the player. The AI Grid uses NVIDIA's RTX PRO 6000 Blackwell Server Edition GPUs, which provide the horsepower needed for cloud-level performance at the edge.

“We're at a generational inflection point where AI and telecommunications are converging on the same infrastructure, creating a distributed edge AI network perfectly suited for inference,” noted Chris Penrose, Global VP of Business Development for Telco at NVIDIA. The success of the Philadelphia trials confirms that this vision is not just theoretical but a commercially viable strategy for delivering the next generation of digital services.

Personalization, Privacy, and the Path Forward

While the promise of hyper-personalized AI is compelling, it walks a fine line with user privacy. The AI Grid model offers a distinct advantage in this domain. By processing data locally at the network edge, sensitive personally identifiable information (PII) can remain within the secure confines of the local network, minimizing exposure and reducing the risk of large-scale data breaches. This privacy-first architecture is a critical selling point in an increasingly data-conscious world.

However, the very nature of an AI that 'remembers' and builds an identity-based profile raises new ethical questions. The scope of data collection, the transparency of how memories are used, and the mechanisms for user consent and control will become paramount. As this technology becomes more integrated into daily life—powering everything from smart home devices to in-car assistants and personalized healthcare monitors—establishing clear regulatory frameworks and industry standards will be essential to building and maintaining public trust.

The partnership between Personal AI, Comcast, and NVIDIA represents a pioneering step into this new frontier. It demonstrates a viable path to creating AI that is not only more powerful and efficient but also potentially more private and secure. How the industry navigates the accompanying ethical responsibilities will ultimately determine the success and societal impact of this powerful technology, setting the standard for how personalized intelligence is delivered at scale.