AI's Great Divide: New Report Reveals Voice Tech Fails the Global South

- 5.5 billion people in the Global South affected by AI voice tech underperformance

- 10.6% WER for ElevenLabs Scribe v2 (global), but third place for Vietnamese

- 93% semantic similarity preserved in Vietnamese despite high WER

The report's authors argue that current AI voice recognition benchmarks are misleading because they fail to account for linguistic diversity, and that evaluation needs to be region-specific.

BENGALURU, India – May 13, 2026 – A groundbreaking report released today reveals that the world's leading artificial intelligence speech-recognition tools are significantly underperforming for more than 5.5 billion people across the Global South, exposing a critical flaw in the technology's global rollout. The report challenges the validity of industry-standard performance metrics and questions the billion-dollar deployment decisions being made by enterprises worldwide.

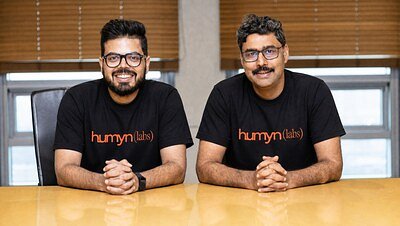

Humyn Labs, a Bengaluru-based AI data infrastructure company, published the BRIDGE (Benchmark of Regional & International Data for Global Evaluation) report, the largest independent benchmark of its kind. The study tested 15 top commercial AI models against real-world conversational data from 22 non-English languages, including diverse Latin American Spanish dialects, Brazilian Portuguese, and Vietnamese. The findings are stark: the AI industry's self-reported accuracy scores, often based on English-centric data, are dangerously misleading.

The Illusion of Global Accuracy

The core revelation from the BRIDGE report is that a high global ranking for an AI voice model means little when applied to a specific regional context. The study found that ElevenLabs Scribe v2, which led the pack with an overall word error rate (WER) of 10.6%, fell to third place for Vietnamese. In that language, the top performer was AssemblyAI Universal—a model that ranked a lowly 12th in the overall global benchmark.
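The gap between an overall ranking and a per-language ranking can be reproduced with a few lines of Python. This is a minimal sketch, not the report's method: the model names and WER values are invented for illustration, and the overall score is assumed to be a plain mean across languages, which may differ from how BRIDGE aggregates.

```python
from statistics import mean

def rank_models(wer_by_model: dict[str, float]) -> list[str]:
    """Return model names ordered from lowest (best) to highest WER."""
    return sorted(wer_by_model, key=wer_by_model.get)

def overall_and_per_language(scores: dict[str, dict[str, float]]):
    """scores maps model -> {language: WER}. Returns (overall ranking, per-language rankings)."""
    # Assumption: the overall score is an unweighted mean over languages.
    overall = {model: mean(langs.values()) for model, langs in scores.items()}
    languages = sorted({lang for langs in scores.values() for lang in langs})
    per_language = {
        lang: rank_models({m: s[lang] for m, s in scores.items() if lang in s})
        for lang in languages
    }
    return rank_models(overall), per_language

# Hypothetical numbers, chosen only to show how the rankings can diverge.
scores = {
    "model_a": {"es": 0.08, "pt": 0.09, "vi": 0.25},
    "model_b": {"es": 0.15, "pt": 0.16, "vi": 0.18},
    "model_c": {"es": 0.12, "pt": 0.11, "vi": 0.22},
}

overall_rank, per_language_rank = overall_and_per_language(scores)
print(overall_rank)             # best-to-worst on the averaged score
print(per_language_rank["vi"])  # best-to-worst for Vietnamese only
```

With these made-up inputs, the model that tops the averaged ranking comes last for Vietnamese, and the model that trails overall comes first, which is the same pattern the report describes.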

This discrepancy highlights a systemic problem that Humyn Labs' co-founders argue has been ignored for too long. Enterprises are pouring vast sums into AI solutions based on performance leaderboards that don't reflect the linguistic reality of their target markets.

"The models are grading their own work," said Manish Agarwal, Co-founder of Humyn Labs, in the press release. "ASR providers published their own accuracy scores using benchmarks built on English-first, internet-trained datasets, with little independent validation. Meanwhile, enterprises are making million-dollar deployment decisions on numbers that rarely reflect how their users in the Global South actually speak."

Before the BRIDGE report, Agarwal noted, "there was no independent benchmark for real-world conversational audio across non-English markets." This lack of independent oversight has created a market where the top-ranked tools are not always the right tools, especially in the regions where voice AI adoption is projected to grow the fastest.

Beyond Word Error Rate: A New Standard for Evaluation

The report's authors contend that the problem lies not just with the AI models, but with the outdated methods used to evaluate them. Traditional benchmarks have overwhelmingly relied on Word Error Rate (WER), a simple metric that counts the words a model substitutes, deletes, or inserts and divides that total by the number of words in the reference transcript. The BRIDGE report introduces a far more sophisticated seven-metric scoring stack designed for the complexities of human conversation.
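For readers unfamiliar with the metric, WER is conventionally the word-level edit distance between a reference transcript and the model's output, divided by the reference length. The sketch below is a generic textbook implementation, assuming whitespace tokenization and no text normalization; it is not taken from the BRIDGE codebase, and the function name is illustrative.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in reference."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words (standard Levenshtein dynamic program).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)

# One substituted word out of six reference words.
print(word_error_rate("the cat sat on the mat", "the cat sat on a mat"))
```

Because every wrong word counts equally, a transcript can score poorly on WER while still conveying the speaker's intent, which is the limitation the additional metrics are meant to address.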

This advanced evaluation includes metrics such as:

* Semantic Similarity: Measures whether the transcription preserves the meaning of the original speech, even if the exact words are wrong.

* Code-Switch F1: Assesses the model's ability to handle "code-switching," the common practice of mixing languages within a single conversation, like "Hinglish" (Hindi-English) in India.

* Loan Word WER: Focuses specifically on the transcription of borrowed words, a frequent occurrence in global languages.
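To make one of these metrics concrete, here is a rough sketch of how a code-switch F1 score could be computed. The article does not give the report's exact definition, so this is an assumption: it treats the metric as token-level F1 over embedded-language words, matched by count rather than position. The function name, the `embedded_vocab` parameter, and the romanized Hinglish sentence are all hypothetical.

```python
from collections import Counter

def code_switch_f1(reference: str, hypothesis: str, embedded_vocab: set[str]) -> float:
    """Token-level F1 restricted to words from the embedded (switched-in) language."""
    ref_cs = Counter(w for w in reference.lower().split() if w in embedded_vocab)
    hyp_cs = Counter(w for w in hypothesis.lower().split() if w in embedded_vocab)

    # Multiset intersection: each embedded-language word counts as correct
    # at most as many times as it appears in both transcripts.
    overlap = sum((ref_cs & hyp_cs).values())
    if overlap == 0:
        return 0.0  # also covers the case where neither side has switched words

    precision = overlap / sum(hyp_cs.values())
    recall = overlap / sum(ref_cs.values())
    return 2 * precision * recall / (precision + recall)

# Hypothetical example: English words embedded in a Hindi sentence.
english_words = {"meeting", "office", "offers"}
score = code_switch_f1(
    "kal meeting hai office mein",   # reference transcript
    "kal meeting hai offers mein",   # model output mishears "office"
    english_words,
)
print(score)  # 0.5
```

A position-aware variant would align the two transcripts first; the count-based version is used here only because it is short enough to read at a glance.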

The value of this nuanced approach is clear. In the case of Vietnamese, the report found that even models with a high word error rate still managed to preserve over 93% of the conversation's original meaning. For a business deploying a customer service bot, understanding the user's intent is far more critical than a perfect word-for-word transcript.

"The models aren't the only problem: the metrics are," stated Ishank Gupta, Co-founder of Humyn Labs. "You cannot evaluate non-English speech with a scoring system designed for English phonology and call it rigorous. The performance leaderboard for Spanish is not the leaderboard for Vietnamese. A single aggregate benchmark score cannot support cross-regional deployment decisions."

Crucially, the data used for the benchmark was not scraped from the internet or based on scripted readings. Instead, Humyn Labs collected real, two-person conversations across multiple regions, capturing the natural cadence, accents, and interruptions of everyday speech. The full dataset has been made available on the open-source platform Hugging Face, allowing for independent verification and further research.

The Billion-Dollar Cost of Misunderstanding

The implications of these findings extend far beyond academic circles. For businesses expanding into emerging markets, the report is a critical warning. Deploying a voice AI system that cannot accurately understand local dialects in a contact center in Mexico City or a healthcare app in Jakarta is not just inefficient—it's a recipe for failure. It can damage brand reputation, alienate customers, and lead to significant financial losses.

The reliance on flawed, English-centric benchmarks has created a "deployment gap" where AI that works perfectly in a Silicon Valley lab fails spectacularly in the real world. This is particularly acute in regions like India, where voice is becoming the primary user interface for hundreds of millions of new internet users. If an AI assistant cannot comprehend the nuances of Bengali, Tamil, or the myriad of other local languages and dialects, its utility is fundamentally limited.

By providing a more accurate and context-aware evaluation tool, the BRIDGE report aims to empower businesses to make smarter technology investments. It allows them to look past marketing claims and select ASR models that are proven to work for their specific target audience, potentially unlocking vast, underserved markets and avoiding costly implementation errors.

A New Player Challenging the AI Establishment

The company behind this disruptive report, Humyn Labs, is positioning itself as a key player in building the foundational infrastructure for the next generation of AI. Based in Bengaluru, the heart of India's tech industry, the firm has largely been self-funded through its own revenue, recently reaching an annualized run rate of $4 to $5 million.

The company has already committed $20 million of its own capital to expand its data collection infrastructure, not just for voice, but for "physical AI"—the intelligence that will power robots and other embodied systems. This strategic focus on collecting "egocentric, source-first data" from regions across Latin America, Southeast Asia, and the Middle East underscores their belief that the future of AI cannot be built on data scraped from the Western internet.

Their work on the BRIDGE report is a clear demonstration of this philosophy. By investing in the creation of a high-quality, independent benchmark for the Global South, Humyn Labs is not just critiquing the current state of AI; it is actively building the tools to fix it. This move establishes them as a credible and serious force, challenging the established AI giants to improve their models and be more transparent about their true capabilities. The report serves as both a public good and a powerful demonstration of the company's expertise in navigating the complex data landscape outside of North America and Europe.

As AI becomes more integrated into the fabric of global society, ensuring it works for everyone is not just a technical challenge but an ethical imperative. The BRIDGE report provides a crucial reality check, forcing the industry to confront its linguistic biases and begin the necessary work of building a more inclusive and equitable AI ecosystem. It suggests that the path forward requires a fundamental shift away from a one-size-fits-all approach and toward a future where technology is built to understand the rich diversity of human language.
