Ant Group's Robbyant Aims to Shatter Robot Vision's Glass Ceiling

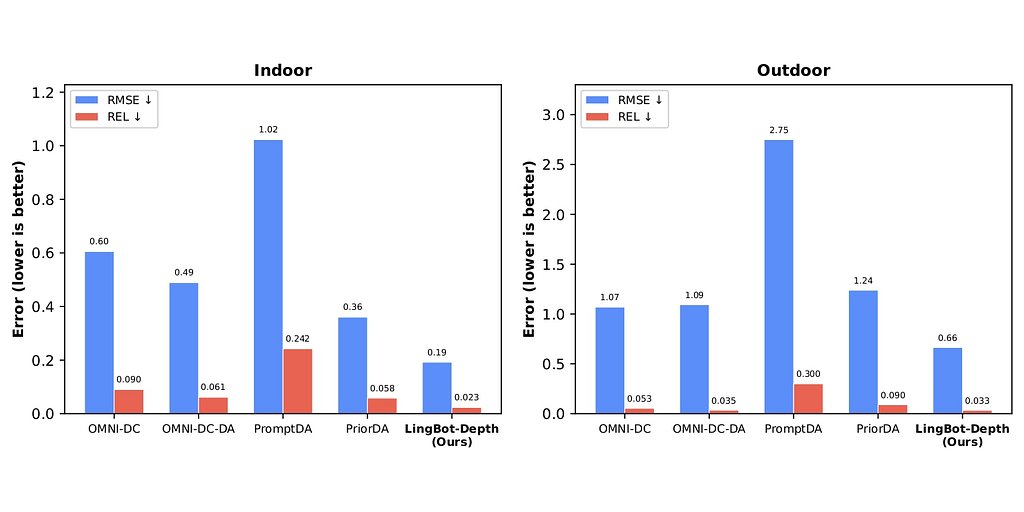

- 70% reduction in relative error in indoor scenes compared to existing models

- 47% error reduction in sparse Structure-from-Motion (SfM) tasks

- 0% success rate for robots grasping transparent objects before LingBot-Depth

Experts agree that LingBot-Depth represents a significant breakthrough in robotic perception, particularly for transparent and reflective surfaces, accelerating the safe deployment of robots in real-world environments.


SHANGHAI, CN – January 27, 2026 – Robbyant, the embodied AI subsidiary of tech giant Ant Group, has open-sourced a new artificial intelligence model that tackles one of the most persistent and dangerous blind spots in robotics: the inability to accurately perceive transparent and reflective surfaces. The model, named LingBot-Depth, promises to dramatically enhance how robots see and interact with the world, a critical step toward their safe and reliable deployment in homes, factories, and hospitals.

In a move signaling a major push to build an open ecosystem for robotics, Robbyant also announced a strategic partnership with Orbbec, a leading provider of 3D vision hardware. The collaboration will see LingBot-Depth integrated directly into Orbbec's next-generation depth cameras, creating a powerful fusion of cutting-edge software and specialized hardware designed to give robots unprecedented visual acuity.

This dual announcement represents a significant development in the field of embodied AI, addressing a fundamental challenge that has long limited the capabilities and safety of autonomous systems. By making the technology freely available, Ant Group is betting that an open, collaborative approach is the fastest way to accelerate the future of robotics.

The “Glass Ceiling” of Robotic Perception

For years, a common but vexing problem has plagued robot developers. While a human can easily spot a glass door or a mirrored wall, these surfaces are often invisible to a robot. Standard depth-sensing cameras, which typically use infrared light or stereo vision to map a 3D environment, fail when confronted with transparent or highly reflective materials. The light they rely on either passes straight through the material or scatters unpredictably, leaving gaping holes or severe noise in the robot's perception data.

This limitation is not a minor inconvenience; it's a critical safety and operational failure point. A warehouse robot might fail to see a clear plastic tote, causing a costly picking error. A home-assistance robot could collide with a sliding glass door, posing a risk to itself and its surroundings. In practical tests, the challenge is stark: before LingBot-Depth was applied, a robot's success rate at grasping a transparent storage box was zero. For a common steel cup, the rate was only 65%, showing that even less extreme reflections cause trouble.

This “glass ceiling” of perception has effectively confined many advanced robots to highly controlled environments, free of the visual chaos of the real world. To move from the factory floor to the family living room, robots need to overcome this sensory deficit.

Inside LingBot-Depth: How Masked Modeling Creates Clarity

Robbyant’s solution is LingBot-Depth, a model built on a clever technique called Masked Depth Modeling (MDM). Instead of just trying to fix corrupted sensor data, the MDM approach trains the AI to become an expert at inferring 3D shapes from 2D visual cues. During training, the system is fed RGB camera images paired with depth data, but parts of the depth map are intentionally hidden or “masked.”

This process forces the model to learn the relationship between an object's visual appearance—its texture, contours, lighting, and context—and its physical, three-dimensional shape. It learns that the faint reflections on a surface might indicate glass, or that the continuous lines of a window frame imply a flat, transparent plane connecting them. When deployed in the real world, LingBot-Depth can then use this learned intuition to intelligently reconstruct missing depth information, filling in the blanks left by the sensor's physical limitations.
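The masking step described above can be illustrated with a minimal Python sketch. It is not Robbyant's implementation: the patch size, the masking ratio, the use of 0.0 to mark a hidden value, and the function names `mask_depth_patches` and `masked_l1` are all assumptions made for illustration. The sketch hides random square patches of a depth map and scores a prediction only on the hidden pixels, which is the general shape of a masked-reconstruction objective.

```python
import numpy as np

def mask_depth_patches(depth, patch=4, mask_ratio=0.5, seed=0):
    """Hide a random subset of square patches in a depth map.

    Returns (masked_depth, hidden), where `hidden` is a boolean array that is
    True wherever the depth value was removed. Hidden pixels are set to 0.0,
    an assumed "no reading" marker.
    """
    h, w = depth.shape
    if h % patch or w % patch:
        raise ValueError("depth shape must be divisible by the patch size")
    rng = np.random.default_rng(seed)
    gh, gw = h // patch, w // patch
    n_hidden = int(round(mask_ratio * gh * gw))
    # Pick which patches to hide, without replacement.
    flat = np.zeros(gh * gw, dtype=bool)
    flat[rng.choice(gh * gw, size=n_hidden, replace=False)] = True
    # Expand the patch-level grid to pixel resolution.
    grid = flat.reshape(gh, gw)
    hidden = np.repeat(np.repeat(grid, patch, axis=0), patch, axis=1)
    masked = np.where(hidden, 0.0, depth)
    return masked, hidden

def masked_l1(pred, target, hidden):
    """Mean absolute error computed only over the hidden pixels."""
    return float(np.abs(pred - target)[hidden].mean())

# Toy 8x8 depth map in metres (made-up values).
depth = np.linspace(0.5, 3.0, 64).reshape(8, 8)
masked, hidden = mask_depth_patches(depth, patch=4, mask_ratio=0.5)
print("hidden pixels:", int(hidden.sum()))
# A real model would predict depth from the RGB image plus `masked`;
# here the masked map itself stands in as a deliberately poor prediction.
print("loss of naive prediction:", masked_l1(masked, depth, hidden))
```

In an actual training loop the prediction would come from a network that sees the RGB image alongside the masked depth, so lowering this loss requires inferring geometry from appearance rather than copying sensor values.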

The results are striking. On established academic benchmarks like NYUv2 and ETH3D, LingBot-Depth drastically outperforms existing mainstream solutions. It achieved a relative error reduction of over 70% in indoor scenes compared to models like PromptDA and PriorDA. On the notoriously difficult sparse Structure-from-Motion (SfM) task, it reduced errors by approximately 47%. These figures, professionally certified by Orbbec's Depth Vision Laboratory, represent a significant leap forward in depth estimation accuracy and reliability.
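To make the phrase "relative error reduction" concrete, here is a small Python sketch. The article does not say which metric was used, so this assumes the widely used absolute relative error (the mean of |predicted - true| / true over valid pixels). The depth values are invented for illustration and are not LingBot-Depth results; `abs_rel` and `error_reduction` are hypothetical helper names.

```python
def abs_rel(pred, gt):
    """Mean absolute relative error over pixels with a valid ground truth.

    Pixels whose ground-truth depth is not positive are treated as invalid
    and skipped.
    """
    pairs = [(p, g) for p, g in zip(pred, gt) if g > 0]
    if not pairs:
        raise ValueError("no valid ground-truth pixels")
    return sum(abs(p - g) / g for p, g in pairs) / len(pairs)

def error_reduction(baseline_err, new_err):
    """Fraction by which new_err improves on baseline_err."""
    return (baseline_err - new_err) / baseline_err

# Made-up depths in metres; the last pixel has no ground truth.
gt       = [1.0, 2.0, 4.0, 0.0]
baseline = [1.2, 2.4, 3.2, 1.0]
improved = [1.05, 2.1, 3.8, 1.0]

base_err = abs_rel(baseline, gt)
new_err = abs_rel(improved, gt)
print(f"baseline AbsRel: {base_err:.3f}")
print(f"improved AbsRel: {new_err:.3f}")
print(f"relative reduction: {error_reduction(base_err, new_err):.0%}")
```

Under this reading, a 70% reduction means the new model's error is less than a third of the baseline's on the same benchmark, not that the error dropped by 70 percentage points.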

A Strategic Alliance of Software and Silicon

While LingBot-Depth is compatible with a range of existing cameras, Robbyant's partnership with Orbbec underscores a crucial industry trend: the deep integration of AI software and the hardware it runs on. Orbbec, a major player in the 3D vision market with a history of collaboration with tech leaders like Microsoft and NVIDIA, provides the specialized “eyes” for Robbyant's AI “brain.”

The model was co-optimized and trained using data from Orbbec’s Gemini 330 stereo 3D cameras. These cameras, powered by Orbbec's proprietary MX6800 depth engine chip, provide high-quality, chip-level raw depth data. This close coupling allows LingBot-Depth to access a richer, more stable data foundation than is typically available from off-the-shelf sensors.

"Robbyant's work in spatial intelligence models and algorithms complements Orbbec's expertise in 3D vision chips and robotic vision systems," said Len Zhong, Head of Product Management at Orbbec. "In this collaboration, the chip-level depth data provided by the Gemini 330 delivers a stable, high-fidelity, and physically grounded data foundation for the LingBot-Depth model. It's a great example that demonstrates close coupling between a robot's sensing hardware and its perception intelligence."

This synergy of software and silicon is designed to give developers a powerful, out-of-the-box solution that accelerates the development of more capable and reliable robots.

Ant Group's Vision for an Embodied AI Future

The launch of LingBot-Depth is more than just a technical achievement; it's a clear indicator of Ant Group's strategic ambitions. The fintech giant is aggressively diversifying into frontier technologies, with embodied AI as a core pillar. Robbyant's mission is to develop the foundational models and hardware for the next generation of intelligent devices, from robotic companions for elderly care to assistants for complex household tasks.

By open-sourcing LingBot-Depth and planning to release a massive, high-quality dataset of 2 million RGB-depth pairs, Ant Group is making a statement. This approach, part of its broader "InclusionAI" initiative, stands in contrast to the more closed, proprietary ecosystems of some competitors in the robotics space, such as Boston Dynamics or Tesla. The strategy is to foster a vibrant community and accelerate innovation by lowering the barrier to entry.

"Reliable 3D vision is critical to the advancement of embodied AI," noted Zhu Xing, Chief Executive Officer of Robbyant. "By open-sourcing LingBot-Depth and collaborating with hardware pioneers like Orbbec, we aim to lower the barrier to advanced spatial perception and accelerate the adoption of embodied intelligence across homes, factories, warehouses, and beyond."

The release of LingBot-Depth, alongside other foundational models, is a key step in Ant Group’s long-term vision of integrating AI into the physical world. By equipping robots with the ability to see and understand complex human environments, this technology paves the way for systems that can provide meaningful assistance in daily life, transforming industries and enhancing human capability.