Ant Group's LingBot-Map Sets New Bar for Real-Time 3D Vision

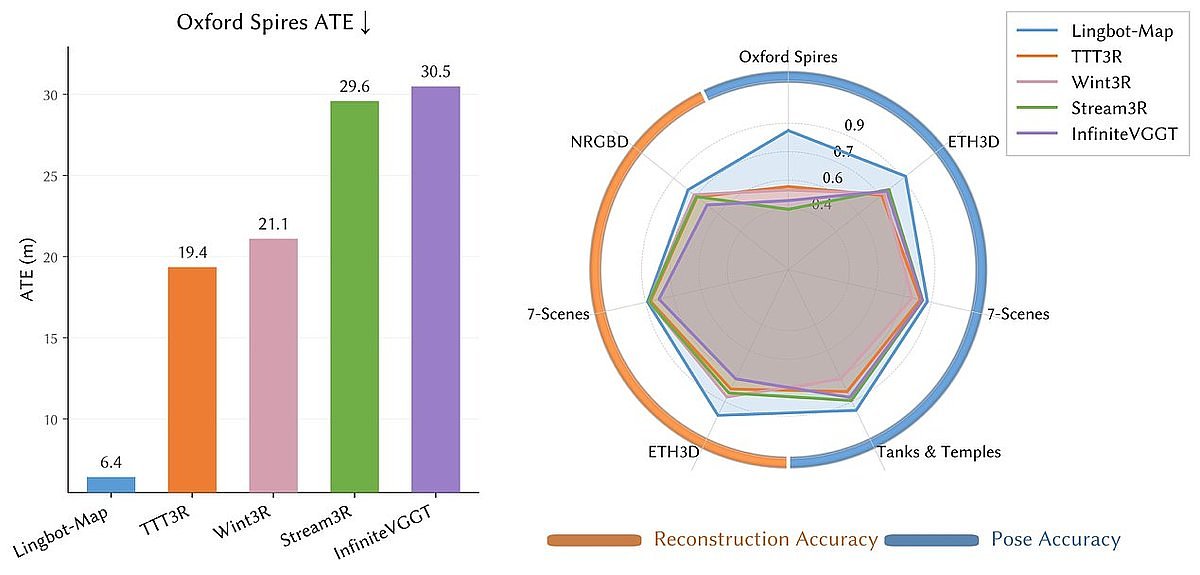

- Absolute Trajectory Error (ATE): 6.42 meters on the Oxford Spires dataset (lower is better), a nearly 2.8-fold improvement over the previous best streaming method.

- F1 Score: 98.98 on the ETH3D benchmark, more than 21 percentage points higher than the next-best method.

- Processing Speed: Approximately 20 frames per second (FPS) with stability over sequences exceeding 10,000 frames.

Taken together, these figures position LingBot-Map as a significant advance in real-time 3D vision, with direct relevance to spatial intelligence in robotics, autonomous vehicles, and augmented reality.

SHANGHAI, April 16, 2026 – Robbyant, the embodied AI division of Chinese technology giant Ant Group, has released and open-sourced LingBot-Map, a groundbreaking model that enables machines to understand and reconstruct their physical surroundings in real-time 3D using only a standard RGB camera. The move signals a significant leap forward in spatial intelligence, promising to accelerate innovation in robotics, autonomous vehicles, and augmented reality.

Unlike traditional 3D reconstruction techniques that require processing a complete set of images offline, LingBot-Map operates on a streaming, “see-as-you-go” basis. It continuously builds a detailed 3D map of its environment while simultaneously tracking its own position, frame by frame. This capability for online spatial awareness is critical for any intelligent system that needs to navigate and interact with the dynamic, unstructured human world.

A New Benchmark in Spatial Perception

The performance metrics released by Robbyant position LingBot-Map as a new leader in its class. On the notoriously challenging Oxford Spires dataset, a large-scale outdoor benchmark known for its difficult lighting and complex geometry, the model achieved an Absolute Trajectory Error (ATE, where lower is better) of just 6.42 meters. That is a nearly 2.8-fold reduction in trajectory error compared with the previous top-performing streaming method. Notably, LingBot-Map also outperformed established offline methods like DA3 (12.87 meters ATE) and VIPE (10.52 meters ATE), which have the advantage of processing all data at once.
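
For readers unfamiliar with the metric, ATE is usually reported as the root-mean-square distance between estimated and ground-truth camera positions once the two trajectories have been aligned. The sketch below is a simplified illustration, not Robbyant's evaluation code: the function name `ate_rmse` and the toy trajectories are hypothetical, and it aligns only by centroid, whereas benchmark evaluations typically fit a full rigid or similarity transform first.

```python
import math


def ate_rmse(estimated, ground_truth):
    """RMSE of position error after centroid (translation-only) alignment.

    Both arguments are equal-length sequences of (x, y, z) positions.
    Assumption: a real evaluation would also solve for rotation (and often scale).
    """
    if len(estimated) != len(ground_truth) or not estimated:
        raise ValueError("trajectories must be non-empty and of equal length")
    n = len(estimated)
    # Centroid of each trajectory, used to remove any constant offset.
    c_est = [sum(p[i] for p in estimated) / n for i in range(3)]
    c_gt = [sum(p[i] for p in ground_truth) / n for i in range(3)]
    squared_error = 0.0
    for e, g in zip(estimated, ground_truth):
        squared_error += sum(
            ((e[i] - c_est[i]) - (g[i] - c_gt[i])) ** 2 for i in range(3)
        )
    return math.sqrt(squared_error / n)


# Toy example: the estimate drifts 3 m upward on the last frame.
truth = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
drifting = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 3.0)]
print(round(ate_rmse(drifting, truth), 3))  # about 1.414
```

Applying simple division to the reported figures, LingBot-Map's 6.42-meter error is about half of DA3's (12.87 / 6.42 ≈ 2.0) and roughly 1.6 times lower than VIPE's (10.52 / 6.42 ≈ 1.6).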

This superior performance extends across a suite of major international benchmarks. On the ETH3D benchmark, which evaluates 3D reconstruction quality, LingBot-Map achieved a staggering F1 score of 98.98, more than 21 percentage points higher than the next-best method. The model also demonstrated leading results on the 7-Scenes and Tanks and Temples datasets, solidifying its state-of-the-art status in both pose estimation and reconstruction fidelity.
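
Reconstruction benchmarks of this kind generally score a point cloud with an F1 measure that balances precision (how many reconstructed points lie close to the true surface) against recall (how much of the true surface is covered). The following is a minimal sketch under stated assumptions: the function name `reconstruction_f1`, the distance threshold, and the brute-force nearest-neighbor search are illustrative choices, not the ETH3D toolkit. It returns a fraction between 0 and 1, while the 98.98 quoted above is presumably expressed on a 0 to 100 scale.

```python
import math


def reconstruction_f1(reconstructed, ground_truth, threshold):
    """F1 between two point clouds at a given distance threshold.

    Assumption: points are (x, y, z) tuples and both clouds are small enough
    for an O(n * m) nearest-neighbor search.
    """
    if not reconstructed or not ground_truth:
        return 0.0

    def fraction_within(source, target):
        # Share of source points whose nearest target point is within threshold.
        hits = sum(
            1
            for p in source
            if min(math.dist(p, q) for q in target) <= threshold
        )
        return hits / len(source)

    precision = fraction_within(reconstructed, ground_truth)
    recall = fraction_within(ground_truth, reconstructed)
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Toy example: two accurate points, one outlier, and two uncovered true points.
true_surface = [(0, 0, 0), (1, 0, 0), (2, 0, 0), (3, 0, 0)]
scan = [(0, 0, 0.01), (1, 0, 0.01), (5, 0, 0)]
print(round(reconstruction_f1(scan, true_surface, threshold=0.05), 3))
```

In this toy case precision is 2/3 and recall is 2/4, so the harmonic mean comes out at 4/7, or about 0.571. Because F1 penalizes both stray points and missing coverage, a score near the top of the scale requires a reconstruction that is both accurate and nearly complete.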

At the heart of this achievement is a novel architecture built on a Geometric Context Transformer. The key innovation, a mechanism called Geometric Context Attention (GCA), allows the model to efficiently manage and recall geometric information over time. Inspired by the hierarchical data management of classic SLAM (Simultaneous Localization and Mapping) algorithms, the GCA mechanism integrates an anchor context for coordinate grounding, a local window for fine geometric detail, and a long-term trajectory memory to correct for drift. This purely autoregressive approach allows LingBot-Map to achieve both high accuracy and remarkable efficiency, processing video at approximately 20 frames per second (FPS) and maintaining stability over sequences exceeding 10,000 frames without significant accuracy degradation.
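
One way to picture the three-part context described above is as a rule for deciding which earlier frames a new frame is allowed to attend to. The sketch below is purely illustrative and is not taken from the released model: the names `FrameContext` and `select_context`, the default sizes, and the idea of subsampling older frames at a fixed stride as a stand-in for the trajectory memory are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class FrameContext:
    anchor: list[int]   # earliest frames, fixing the coordinate frame
    memory: list[int]   # sparse older frames, standing in for trajectory memory
    window: list[int]   # most recent frames, carrying fine local detail


def select_context(t, n_anchor=1, window=8, memory_stride=16):
    """Choose indices of past frames visible to frame t (hypothetical rule)."""
    # Anchor: the first n_anchor frames that already exist before frame t.
    anchor = list(range(min(n_anchor, t)))
    # Local window: the most recent frames, excluding anything used as anchor.
    window_start = max(n_anchor, t - window)
    local = list(range(window_start, t))
    # Memory: every memory_stride-th frame between the anchor and the window.
    memory = [
        i for i in range(n_anchor, window_start)
        if (i - n_anchor) % memory_stride == 0
    ]
    return FrameContext(anchor=anchor, memory=memory, window=local)


ctx = select_context(100)
print(ctx.anchor)  # [0]
print(ctx.memory)  # [1, 17, 33, 49, 65, 81]
print(ctx.window)  # [92, 93, 94, 95, 96, 97, 98, 99]
```

The point of the layout is that the per-frame context stays small compared with attending to every previous frame, which is what makes long streaming sequences tractable. How the actual model compresses its long-term memory is not described here, so the stride-based selection should be read only as a placeholder.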

Ant Group's Strategic Open-Source Play

The decision to open-source LingBot-Map is a calculated move within Ant Group's broader strategy to build a foundational technology stack for embodied AI. Robbyant has been steadily releasing a suite of interconnected “LingBot” models, each tackling a different piece of the embodied intelligence puzzle. LingBot-Map fills what the company identified as a critical gap: the ability to create a stable, real-time 3D model of the world from continuous sensory input.

This new model works in synergy with Robbyant's previous releases. It complements LingBot-Depth, a model designed for high-precision depth perception, especially on challenging surfaces like glass. It provides the crucial spatial context for LingBot-VLA (Vision-Language-Action) models, which enable robots to understand and execute commands. Furthermore, the 3D reconstructions from LingBot-Map can serve as geometrically consistent training data or validation environments for LingBot-World, the company's generative world model that simulates interactive environments.

By releasing this powerful tool under an open-source license on platforms like GitHub and Hugging Face, Ant Group is aiming to cultivate a global developer ecosystem. This strategy seeks to democratize access to state-of-the-art spatial AI, lowering the barrier to entry for startups, researchers, and academic institutions. The move positions Robbyant not just as a technology provider, but as a central player in a growing community, challenging established commercial platforms like Google's ARCore and Apple's ARKit, which often operate within more closed ecosystems.

Transforming Robotics and Augmented Reality

The practical implications of LingBot-Map's capabilities are vast and could redefine what is possible for autonomous systems. For robotics, the model's accuracy and real-time performance translate directly to more robust navigation and safer operation in complex, dynamic spaces. Robots equipped with this technology can build maps of unfamiliar environments on the fly, avoid obstacles with greater reliability, and perform complex manipulation tasks that require a precise understanding of 3D space.

In the autonomous vehicle sector, LingBot-Map offers a powerful and cost-effective perception solution. While high-end autonomous systems rely on expensive LiDAR and radar sensors, this model's ability to generate highly accurate 3D data from simple RGB cameras presents an opportunity to create a redundant, vision-based safety layer or enhance the capabilities of more affordable systems.

For the burgeoning fields of augmented and virtual reality (AR/VR), LingBot-Map provides the foundational layer for true immersion. AR applications depend on a device's ability to understand the geometry of the real world to anchor virtual objects realistically. The model's real-time, high-fidelity mapping can enable more stable and interactive AR experiences, where digital content seamlessly blends with the physical environment. It also opens the door for rapid content creation, allowing developers to scan and digitize real-world spaces with unprecedented ease.

The launch of LingBot-Map comes at a time when the industry is moving towards end-to-end Geometric Foundation Models (GFMs) that predict 3D structure in a single forward pass. Robbyant's entry stands out for its performance, efficiency, and accessibility. By placing this advanced capability into the hands of the open-source community, Ant Group is not only showcasing its technical prowess but is also making a significant investment in accelerating the shared future of embodied AI.