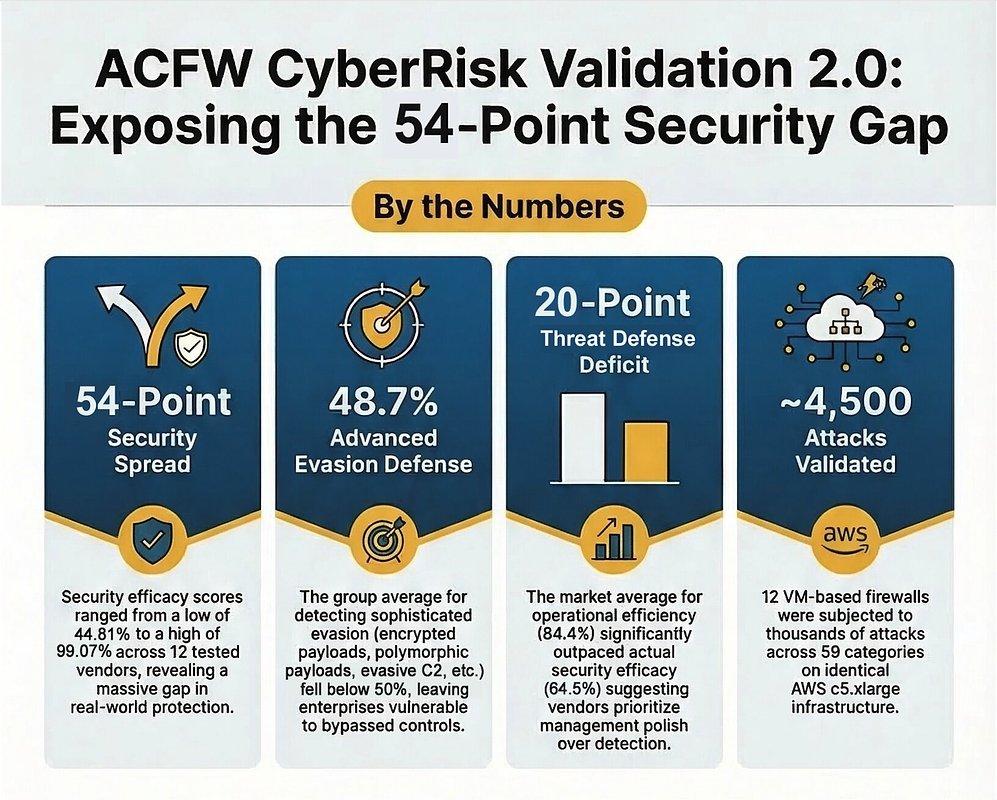

Cloud Firewalls Have a 54-Point Security Gap, Landmark Study Finds

- 54-point spread in security efficacy scores among leading cloud firewalls

- 48.73% average score against advanced evasion techniques, meaning more than half of these attacks got through

- 64.5% average security efficacy vs. 84.4% operational efficiency

Experts emphasize the critical need for independent validation of cloud firewall performance, as real-world efficacy varies widely and often falls far short of vendor claims, particularly against sophisticated threats.

AUSTIN, TX – March 19, 2026 – A landmark study has revealed a startling and dangerously wide gap in the effectiveness of commercial cloud firewalls, suggesting that many enterprises investing heavily in cloud security may be left with a false sense of protection. Independent testing laboratory SecureIQLab today published findings from its ACFW CyberRisk Validation 2.0 program, which discovered a staggering 54-point spread in security efficacy scores among 12 of the market's leading advanced cloud firewalls.

The comprehensive validation, which subjected the products to over 4,500 attacks, found that security efficacy ranged from a low of 44.81% to a high of 99.07%. The results expose a critical disparity between the marketing claims of vendors and the real-world performance of their products when faced with sophisticated cyber threats, indicating that enterprises choosing a solution without independent data are, as the report notes, "flying blind."
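The headline figure follows directly from the two extremes quoted above. A minimal sketch of that arithmetic, using only the published low and high scores (the variable names are illustrative and do not come from the report):

```python
# Efficacy figures quoted in the article, in percent.
lowest_efficacy = 44.81
highest_efficacy = 99.07

# Spread between the best and worst product, in percentage points.
spread_points = round(highest_efficacy - lowest_efficacy, 2)

print(f"Spread: {spread_points} percentage points")
```

The result, a little over 54 points, is the "54-point spread" cited throughout.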

The Illusion of Security: A 54-Point Reality Check

At the core of the report is the dramatic variance in performance. While all 12 vendors market their products as 'advanced' security solutions, the empirical data tells a different story. The test, conducted on identical AWS cloud infrastructure to ensure a level playing field, evaluated the firewalls across four key pillars: threat defense, compliance, operational capabilities, and resiliency.

While the products performed well in policy enforcement, with compliance scores averaging a robust 94.3%, their ability to stop actual threats showed profound weakness. The most alarming finding was the collective failure to defend against advanced evasion techniques, the methods attackers design specifically to bypass security controls. The group average score for this crucial category plummeted to just 48.73%, meaning that, on average, more than half of these sophisticated attacks slipped past the firewalls.
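To make the relationship between the score and the failure rate explicit, here is a small sketch. It assumes the evasion score is the percentage of attacks blocked, which is how the article reads but is not spelled out in the text; `bypass_rate` is a hypothetical helper, not something taken from the report.

```python
def bypass_rate(block_score_percent: float) -> float:
    """Return the share of attacks not blocked, given a block score in percent.

    Assumption: the score is the percentage of attacks stopped.
    """
    if not 0.0 <= block_score_percent <= 100.0:
        raise ValueError("score must be between 0 and 100")
    return round(100.0 - block_score_percent, 2)


# Group average for advanced evasion defense, as quoted in the article.
avg_evasion_score = 48.73
avg_bypass = bypass_rate(avg_evasion_score)

print(f"Average bypass rate: {avg_bypass}%")
```

Under that assumption, a 48.73% average score corresponds to roughly 51% of evasion attempts getting through, which is consistent with "more than half."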

"The data shows a market that has matured unevenly," stated David Ellis, VP of Research and Corporate Relations at SecureIQLab, in the announcement. "When the average score for advanced evasion defense is below 50%, enterprises are making procurement decisions with a significant blind spot. That is the problem independent validation exists to solve."

This gap between compliance and true threat defense highlights a critical risk. A firewall may successfully enforce company policies, such as blocking access to certain websites, but still fail to detect and block a malicious payload hidden within encrypted traffic, effectively leaving the door open for attackers.

The Sophisticated Threat: Why Modern Firewalls Are Failing

The report's findings on evasion techniques offer a sobering look into the capabilities of modern cyber adversaries and the lagging defenses of some security products. These are not basic, brute-force attacks; they are the subtle and insidious methods used by advanced persistent threats (APTs) and organized cybercrime groups.

The validation tested 52 distinct attack techniques across 17 evasion categories. These included:

- Encrypted Payloads: Malicious code hidden within SSL/TLS-encrypted traffic, which many firewalls fail to inspect properly.

- Living-off-the-Land (LotL) Techniques: Using a target's own legitimate system tools and processes to carry out an attack, making malicious activity difficult to distinguish from normal operations.

- Polymorphic Payloads: Malware that constantly changes its code to avoid detection by signature-based security tools.

- Evasive Command and Control (C2): Hiding communication between an infected system and the attacker's server by using common cloud services or encrypting the channel.

Experts note that the ability to defeat these techniques is the true measure of an 'advanced' security solution. That the average firewall in the test failed to stop more than half of them suggests that many products are not equipped for the current threat landscape, where attackers assume security controls are present and engineer their attacks specifically to circumvent them.

Polish Over Protection? The Operational Efficiency Dilemma

One of the most revealing insights from the SecureIQLab report is the disparity between how easy the products are to manage and how well they actually protect a network. The group average for operational efficiency was a strong 84.4%, while the average for security efficacy was a much lower 64.5%. This gap of nearly 20 points (19.9) suggests a market in which vendors may be prioritizing a polished user interface and ease of management over the core, complex work of threat detection.

In the rapidly growing cloud firewall market, projected to expand at over 17% annually, vendors are competing to reduce the complexity of managing security across multi-cloud and hybrid environments. A slick, intuitive management console is a powerful selling point for overburdened IT teams. However, the data indicates this focus on 'management polish' may come at the cost of investment in the underlying security engine.

This trend raises critical questions for product development and procurement. While operational efficiency is important, it cannot be the primary metric for a security tool. An easily managed firewall that fails to stop advanced threats is ultimately a costly and dangerous liability.

The Call for Independent Validation

SecureIQLab's report serves as a powerful argument for the role of independent, transparent, and rigorous testing in the cybersecurity industry. To ensure the credibility of its explosive findings, the lab operated under the strict guidelines of the Anti-Malware Testing Standards Organization (AMTSO).

"When results vary this widely, the first question enterprises should ask is whether the testing methodology was applied equally to every product," said John Hawes, AMTSO COO, commenting on the process. "The AMTSO Standard exists to answer that question. Every detail of this methodology is published, every vendor was tested under identical conditions, and anyone can examine the process. That is how independent validation earns trust."

The test was entirely funded by SecureIQLab and was non-commissioned, meaning vendors did not pay to be included, nor could they influence the testing process or the publication of the results. For enterprise security leaders, the message is unequivocal: vendor datasheets and marketing claims are not enough. In a market where performance can vary by more than 50 points, empirical, third-party data is no longer a luxury but an essential component of due diligence. Making critical security decisions without it is a gamble that few organizations can afford to lose.